The Federal Trade Commission recently released data indicating that social media platforms were the point of origin for nearly 30% of all reported scam losses in the United States during 2025. With total losses reaching $2.1 billion, these figures provide a sobering look at the intersection of digital connectivity and organized criminal activity. Investment scams accounted for $1.1 billion of this total, while shopping and romance scams rounded out the most prevalent categories of exploitation.

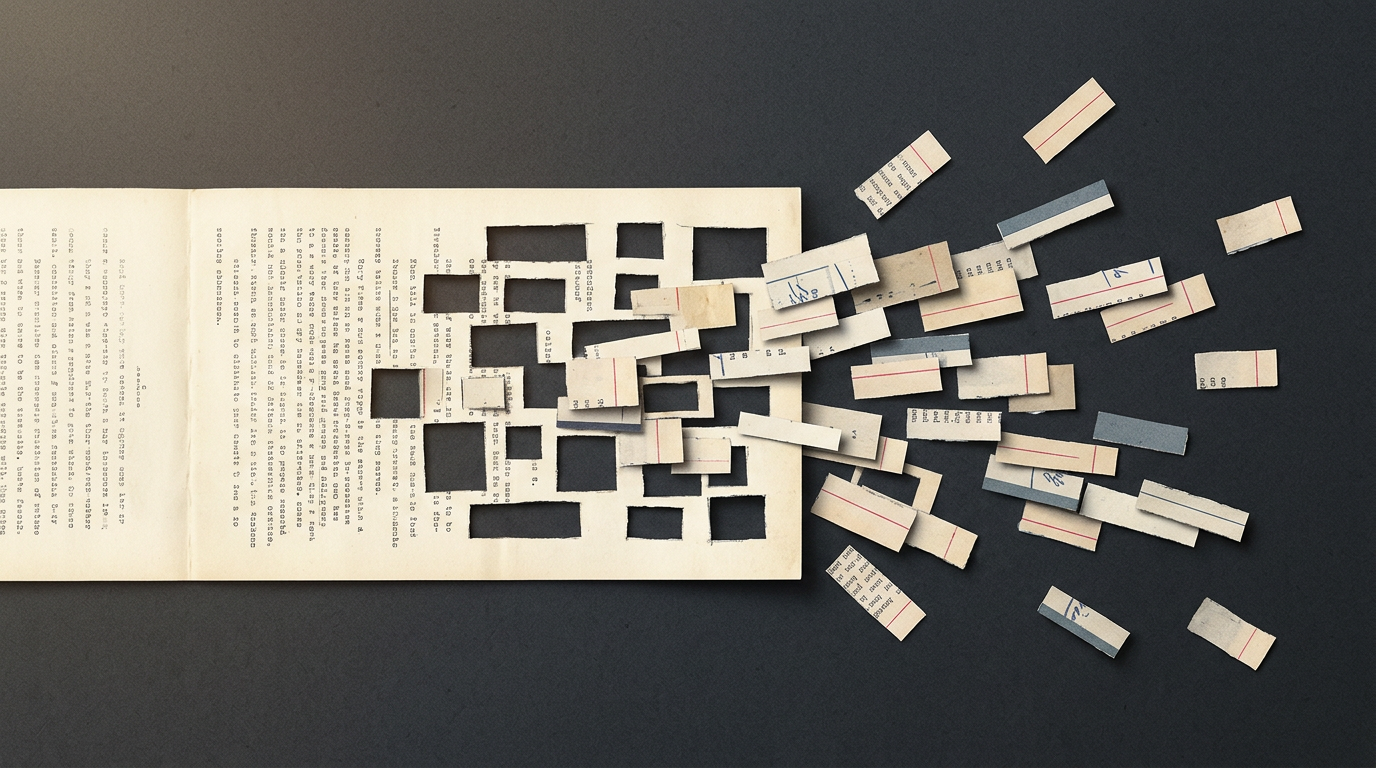

These numbers represent more than a statistical increase in cybercrime; they signal a profound shift in the architecture of digital harm. According to the report, these scams are prevalent across nearly every age demographic, which suggests that the vulnerability is rooted not in user digital literacy alone but in the systematic design of the platforms themselves. The thesis here is that the business model of social media, which prioritizes engagement and reach, is fundamentally at odds with the friction required to verify authenticity and protect users from predatory behavior.

The Architecture of Exploitation

The infrastructure of modern social media is built on the frictionless dissemination of content, a feature that has proven to be a significant liability in the context of fraud. Platforms operate on algorithms designed to maximize time-on-site, often rewarding content that generates high interaction. Criminal actors have successfully weaponized these mechanisms, using sophisticated targeting tools to identify and isolate vulnerable individuals. When a platform’s core incentive is to keep users within a closed ecosystem, the introduction of security measures that slow down interaction or verify identity is often viewed as a threat to growth metrics.

Historically, the internet was viewed as a neutral medium, a "digital town square" where the responsibility for discernment rested primarily with the user. However, as these platforms have evolved into the primary gatekeepers of information and commerce, that historical framing has become increasingly obsolete. The scale of the $2.1 billion loss suggests that platforms are no longer merely passive conduits for information. They are active curators of the social and economic environment, yet they have consistently resisted the regulatory or operational oversight that would typically accompany such a position of influence.

Incentives and the Cost of Friction

These scams often rely on the exploitation of trust, a currency that social media platforms monetize but fail to safeguard. In the case of romance scams, where 60% of reported incidents began on social media, the platform provides the perfect stage for the cultivation of false intimacy. The algorithm facilitates the connection, the messaging tools provide the private channel for manipulation, and the lack of robust identity verification ensures that the perpetrator remains anonymous. For the platform, every interaction, even a fraudulent one, is a data point that feeds the engagement engine.

This creates a perverse incentive structure where the platform benefits from the traffic generated by scam activity. While platforms frequently point to their automated moderation systems, these tools are often reactive rather than proactive. They are designed to identify and remove content that violates policies, but they rarely address the underlying structural vulnerabilities that allow scammers to operate with impunity. When the cost of implementing stringent verification processes exceeds the cost of dealing with the occasional regulatory fine or public relations crisis, the rational corporate decision is to maintain the status quo.

The Regulatory and Social Tensions

For regulators, the challenge lies in balancing the need for consumer protection with the preservation of digital innovation. The current regulatory framework, which often treats platforms as neutral intermediaries under legacy laws, is increasingly ill-equipped to address the reality of modern digital fraud. If platforms are held strictly liable for the scams occurring on their networks, the result could be a fundamental transformation of the social media landscape, potentially moving toward more closed, verified, and gated systems. This would represent a departure from the open-web ethos that characterized the early internet.

At the same time, smaller competitors and startups may find themselves at a disadvantage if new regulations impose compliance costs so heavy that only the largest incumbents can afford them. The tension here is between the necessity of safety and the risk of entrenching platform monopolies. Consumers are caught in the middle, forced to navigate an increasingly hostile digital environment where the very tools meant to connect them are also those most likely to facilitate their financial loss. The societal cost is not just monetary; it is the slow degradation of the digital public sphere.

The Uncertain Outlook for Digital Integrity

What remains uncertain is whether the current wave of financial loss will reach a threshold that forces a shift in platform policy. As the FTC and other global regulators continue to scrutinize the role of social media in facilitating harm, the focus will likely move toward transparency mandates regarding moderation and the efficacy of user protection systems. The question of whether platforms can be incentivized to prioritize safety over engagement remains an open one, with little evidence to suggest a voluntary pivot in the near term.

Looking ahead, the evolution of generative AI will likely complicate this landscape further, making it easier for scammers to create convincing personas and fraudulent advertisements at scale. Distinguishing between legitimate commerce and predatory activity will become increasingly difficult for both users and automated moderation systems. As the digital economy continues to integrate more deeply into our social lives, the pressure to reconcile platform design with basic security standards will only intensify, leaving the industry at a critical inflection point.

As the data on these financial losses continues to accumulate, the conversation must inevitably move beyond individual responsibility and toward the systemic design choices that define our online existence. Whether these platforms will adapt to become safer environments or continue to rely on the current model of unchecked growth remains the fundamental question for the coming years.

With reporting from The Next Web