Google recently disclosed that 75% of its new code is now generated by artificial intelligence and then validated by human engineers, a sign of how quickly software development is changing. The figure, highlighted by CEO Sundar Pichai in a recent update, is up sharply from the 50% reported just last autumn. According to reporting from Xataka, the transition is not merely a productivity gain but a fundamental reorganization of how one of the world's largest technology companies approaches the software development lifecycle.

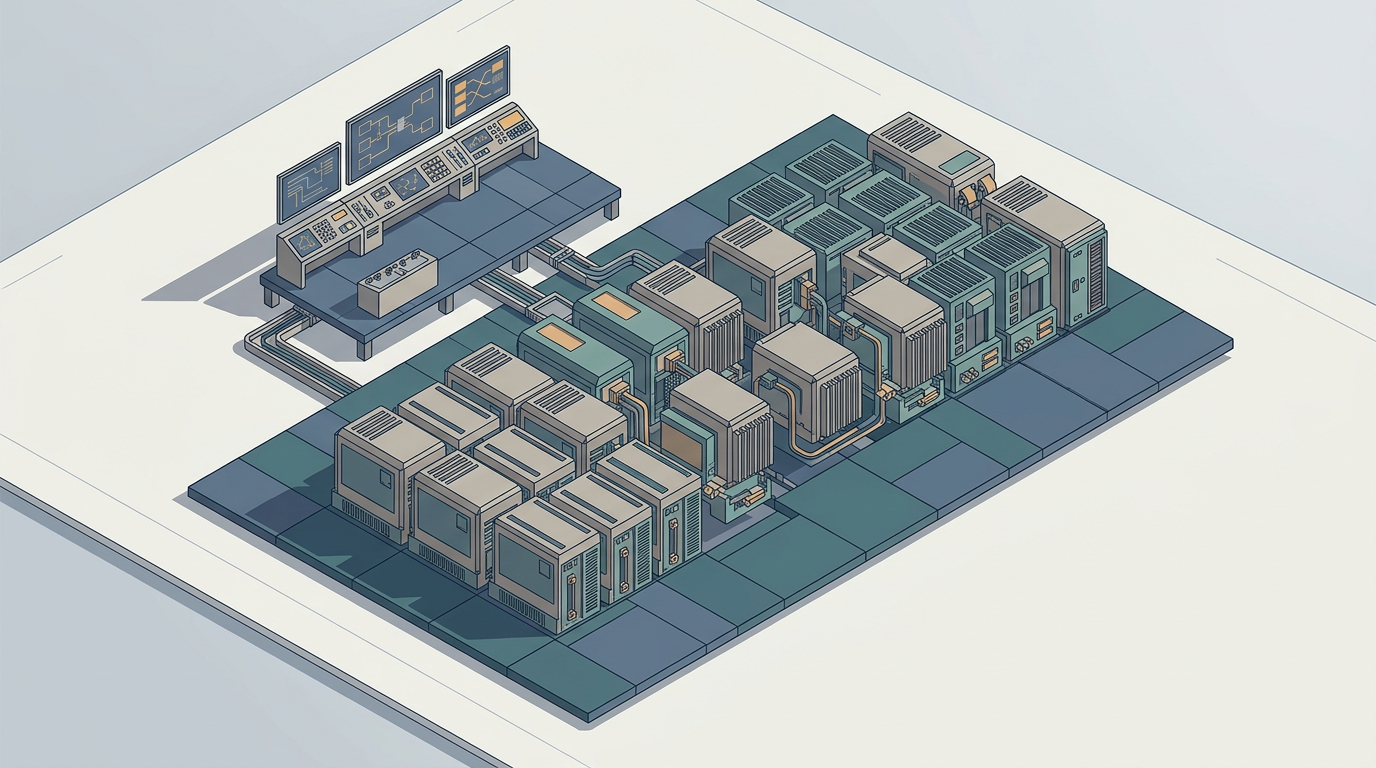

The implications of this shift extend far beyond simple automation. While the industry has long integrated AI into development environments through code completion tools and minor script generation, the current trajectory suggests a move toward what the company describes as "truly agentic" workflows. In these environments, human engineers are increasingly moving away from the manual writing of syntax and toward the orchestration of autonomous digital teams capable of executing complex migrations and system tasks at speeds significantly higher than traditional manual efforts. The central thesis is that the engineer’s value is being redefined, shifting from the granular act of writing lines of code to the higher-level responsibilities of system architecture, design, and complex problem-solving.

The Evolution of the Software Development Lifecycle

The history of software engineering has always been defined by the pursuit of higher levels of abstraction. From the earliest days of punch cards and assembly language to the rise of high-level languages like C, Java, and Python, the goal has consistently been to allow engineers to focus on logic rather than the mechanics of the machine. The current integration of AI into the coding process can be viewed as the next logical step in this long-term progression. By delegating the repetitive, boilerplate-heavy aspects of software creation to large language models, the industry is effectively raising the floor for what constitutes a "standard" development task.

However, this transition introduces a new set of structural tensions. When the majority of a codebase is generated by models rather than people, the nature of technical debt changes. Historically, technical debt was largely the result of human oversight, rushed timelines, or inadequate documentation. In an AI-assisted future, technical debt could become more opaque, embedded in the probabilistic nature of the models themselves. If the underlying logic is generated by systems that operate on patterns rather than deterministic rules, identifying the root cause of a system failure becomes a harder analytical problem, one that requires engineers to understand both the code and the model that produced it.

The Mechanism of Human-in-the-Loop Oversight

At the heart of Google’s approach is the "human-in-the-loop" model, which remains the primary safeguard against the inherent unpredictability of generative AI. As noted in industry discussions, the distinction between code being "generated by AI" and code being "accepted without review" is critical. The current workflow relies on engineers acting as validators, auditors, and curators. This is not a passive role; it requires a high degree of technical competence to discern when a model has hallucinated a solution or introduced subtle, non-obvious security vulnerabilities into a production environment.
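The distinction between "generated by AI" and "accepted without review" can be illustrated with a minimal sketch. It is not Google's tooling; the `Change` record, `can_merge`, and `ai_share` are hypothetical names invented for this example, and the one-approval threshold is an assumption. The point is only that the AI-generated flag records provenance, while the merge decision depends on human approval.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Change:
    """A proposed code change (hypothetical record, for illustration only)."""
    change_id: str
    ai_generated: bool                                   # provenance flag only
    approvals: List[str] = field(default_factory=list)  # names of human reviewers


def can_merge(change: Change, required_approvals: int = 1) -> bool:
    """Allow a merge only when enough distinct humans have approved.

    The ai_generated flag is deliberately ignored here: AI-written code
    faces the same review bar as hand-written code (an assumption).
    """
    return len(set(change.approvals)) >= required_approvals


def ai_share(changes: List[Change]) -> float:
    """Fraction of mergeable changes that were AI-generated."""
    merged = [c for c in changes if can_merge(c)]
    if not merged:
        return 0.0
    return sum(c.ai_generated for c in merged) / len(merged)
```

Under this sketch, a headline figure like the share of AI-generated code counts only changes that a human has already validated; an unreviewed AI suggestion never enters the total.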

This mechanism of oversight creates a feedback loop that is vital for the maturation of these tools. By continuously reviewing and correcting AI-generated suggestions, human engineers are essentially training the next generation of models on their own high-quality output. This implies that as the system matures, the need for human intervention might decrease for routine tasks, but the stakes for the remaining human-led tasks, chiefly the architectural decisions, will likely increase. The efficiency gain cited by Google, where tasks were completed six times faster than previously possible, shows what this hybrid model can deliver, yet it also highlights the risk of over-reliance on systems whose internal logic remains partially obscured.

Stakeholders and the Trust Gap

The divergence between corporate adoption and developer sentiment remains a significant point of friction. While major technology firms are aggressively scaling their use of AI, a substantial portion of the broader software development community continues to express skepticism. Surveys indicate that a significant majority of developers do not fully trust AI-generated code, yet many incorporate these suggestions without rigorous verification. For regulators and enterprise customers, this gap between the velocity of adoption and the maturity of trust protocols is a looming concern. The risk of "black-box" code entering critical infrastructure will likely demand new standards for auditability and transparency.

Furthermore, for the next generation of software engineers, the educational path is becoming increasingly ambiguous. If the entry-level tasks of writing basic functions are increasingly handled by agents, the traditional "apprentice" model of learning by doing becomes difficult to replicate. Companies will need to find new ways to provide junior engineers with the foundational experience required to eventually become the architects who oversee these AI systems. The burden of ensuring that these future leaders have the necessary depth of knowledge rests not just on educational institutions, but on the corporations that are leading this transition.

The Outlook for Autonomous Engineering

The question of how much autonomy should be granted to these systems remains the central challenge for the next few years. As AI models become more capable, the boundary between "assistant" and "agent" will continue to blur. We are likely to see a shift toward more complex, multi-agent systems where different AI models collaborate on larger projects, with humans acting as the ultimate oversight authority. Watching how these companies balance the drive for speed with the necessity of system stability will be crucial for understanding the trajectory of the software industry.

Ultimately, the transition toward AI-driven software production is not a binary event but a continuous process of recalibration. Whether this leads to a more robust and efficient software landscape or a more fragile one depends heavily on the rigor with which human oversight is maintained. As the lines between human creation and machine generation continue to fade, the industry must decide how to preserve the critical analytical skills that ensure software remains safe, reliable, and fundamentally understandable.

With reporting from Xataka
