The Automated Academy: From Scholarly Slop to Synthetic Logic

The academic publishing system has long operated under a familiar set of pressures: produce papers, submit them through increasingly bureaucratic digital portals, survive peer review, repeat. For decades, the "publish or perish" imperative has shaped careers, distorted incentives, and generated a volume of scholarly output that no human could fully absorb. Now large language models (LLMs) are accelerating that dynamic to the point where the system's contradictions are difficult to ignore. AI-generated manuscripts are flooding submission queues, and in some cases AI-generated referee reports are evaluating them, raising the possibility that entire cycles of academic production could proceed with minimal human involvement.

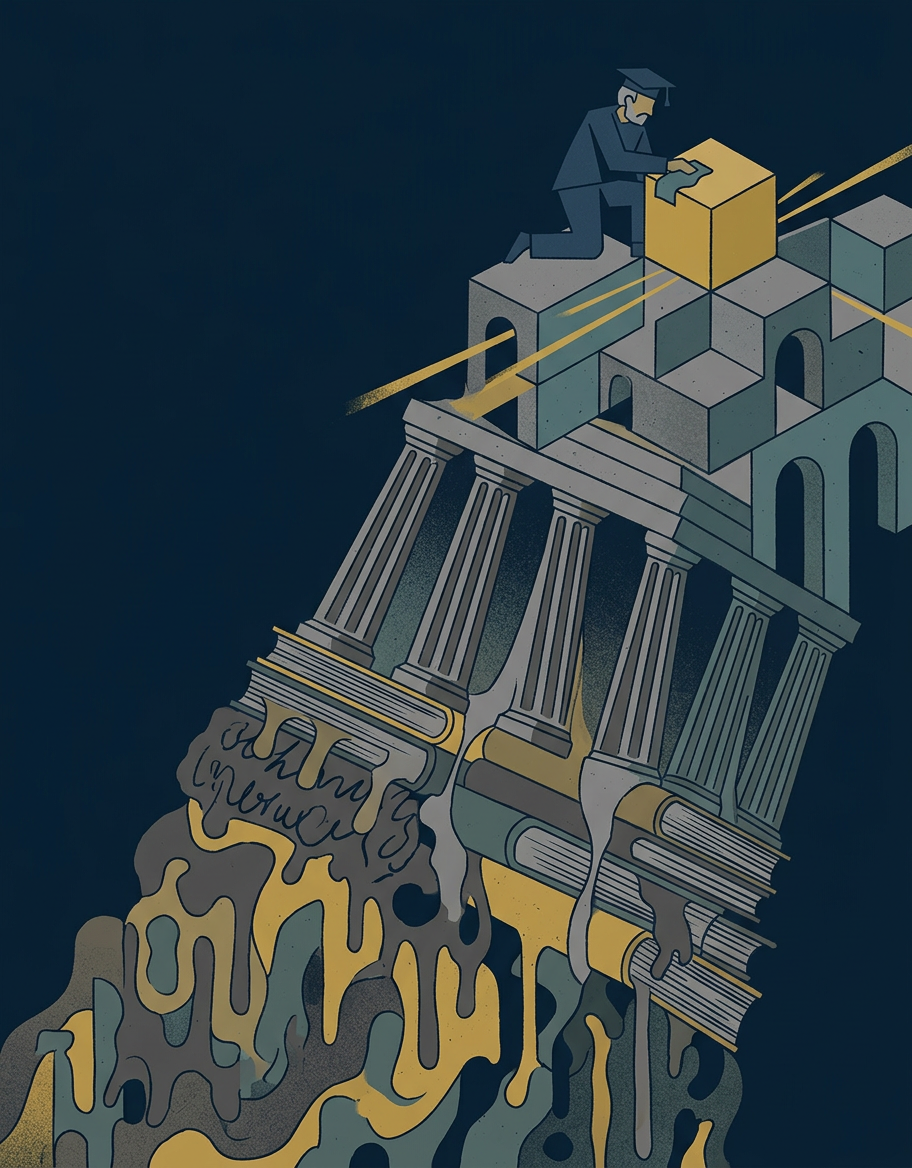

These observations come from a particular vantage point: that of a decision theorist approaching retirement, freed from the treadmill and thus able to examine it with some detachment. From that position, the machinery of modern scholarship looks less like a knowledge-producing enterprise and more like a self-perpetuating loop, one that AI threatens to accelerate past the point of meaningful quality control. The term gaining traction for the lowest tier of this output is "slop": synthetic content that mimics the form of scholarship without contributing substance. The question is whether that label captures the full picture, or whether something more interesting is happening at the frontier of AI-assisted reasoning.

The Ecosystem Problem: When Both Author and Referee Are Machines

The challenge posed by AI in academia is not simply one of authorship. Ghost-written or heavily assisted papers have existed for years; the novelty lies in the systemic scope of automation. When the same class of tools generates the manuscript, drafts the cover letter, and potentially evaluates the submission on the reviewer's side, the feedback loop that is supposed to ensure rigor begins to collapse. Peer review functions — at least in theory — as a mechanism for adversarial scrutiny: one mind testing the claims of another. If both sides of that exchange are synthetic, the process risks becoming a closed circuit, producing outputs that satisfy formal criteria while evading genuine intellectual challenge.

This is not a hypothetical concern. Editors at multiple journals have reported a noticeable uptick in submissions bearing the hallmarks of AI generation: fluent but generic prose, superficially correct but analytically shallow arguments, and occasional telltale artifacts such as the phrase "as a large language model." The peer-review system, already strained by volume and the difficulty of recruiting qualified reviewers, is poorly equipped to absorb a further surge of synthetic material. The structural incentives remain unchanged, since institutions still reward publication volume, but the cost of producing a passable manuscript has dropped precipitously.

Ravens, Green Apples, and the Boundaries of Synthetic Reasoning

Whether AI can move beyond slop toward genuine analytical contribution is a separate and more interesting question. One test case involves Hempel's paradox of confirmation, a classic problem in the philosophy of science. The paradox arises from the logical equivalence between "all ravens are black" and its contrapositive, "all non-black things are non-ravens." If an instance confirms a generalization, and if whatever confirms a statement also confirms its logical equivalents, then observing a green apple (a non-black non-raven) confirms the hypothesis that all ravens are black. The inference is logically valid, yet the conclusion strikes most people as absurd.
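The structure of the paradox can be laid out in a few lines. What follows is a sketch rather than a canonical formalization: the predicate symbols R ("is a raven") and B ("is black"), the labels H and H', and the object name a are notation introduced here for illustration, and the block assumes the `amsmath` package.

```latex
\begin{align*}
H  &:\ \forall x\,\bigl(R(x) \rightarrow B(x)\bigr)
   && \text{all ravens are black} \\
H' &:\ \forall x\,\bigl(\lnot B(x) \rightarrow \lnot R(x)\bigr)
   && \text{all non-black things are non-ravens} \\
H  &\equiv H'
   && \text{contraposition} \\
\lnot B(a) \land \lnot R(a) &\ \text{confirms}\ H'
   && \text{a green apple } a \text{ is a positive instance of } H' \\
\lnot B(a) \land \lnot R(a) &\ \text{confirms}\ H
   && \text{equivalent hypotheses share their confirming evidence}
\end{align*}
```

Each step looks innocent on its own; the discomfort arrives only at the last line, which is why proposed resolutions tend to reject or weaken one of the two confirmation principles rather than the logic of contraposition.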

Posing this problem to AI systems such as OpenAI's Deep Research and the French model Mistral reportedly yields results that go beyond simple pattern matching. The systems appear capable of navigating the paradox's structure, engaging with the interplay between formal logic and intuitive reasoning that makes it a staple of decision theory and epistemology. This does not settle the deeper question of whether such engagement constitutes understanding or merely sophisticated token arrangement, a distinction that philosophers of mind were debating long before LLMs existed. But it does suggest that the ceiling for AI-assisted reasoning may be higher than the flood of academic slop would indicate.

The tension, then, is not simply between human and machine authorship. It is between two possible futures for the academy: one in which AI accelerates the worst tendencies of publish-or-perish culture, burying genuine insight under an avalanche of polished but empty output, and another in which these tools become capable collaborators in domains — like bounded awareness and formal epistemology — where the reasoning is rigorous enough to resist mere mimicry. A retiring scholar can afford to watch that tension play out from a distance. The institutions that must absorb its consequences cannot.

With reporting from Crooked Timber.
