The capital requirements of the generative AI era are reaching a scale that blurs the line between venture investment and industrial infrastructure. Amazon has announced an additional $5 billion investment in Anthropic, the San Francisco-based developer of the Claude AI models, bringing its total commitment to $13 billion. The deal is more than a simple cash infusion; it is a strategic tethering that requires Anthropic to use Amazon’s proprietary Trainium and Inferentia chips to train and deploy its future models.

This deepening partnership arrives at a moment of operational friction for Anthropic. While Claude has garnered a reputation for nuanced reasoning and "constitutional" safety, its success has outpaced its hardware capacity. Recent months have seen frequent performance lags and service outages as the startup has struggled to manage a surge in paid subscribers. By securing up to five gigawatts of compute capacity through Amazon’s data centers, Anthropic is effectively buying the stability required to compete with OpenAI and Google.

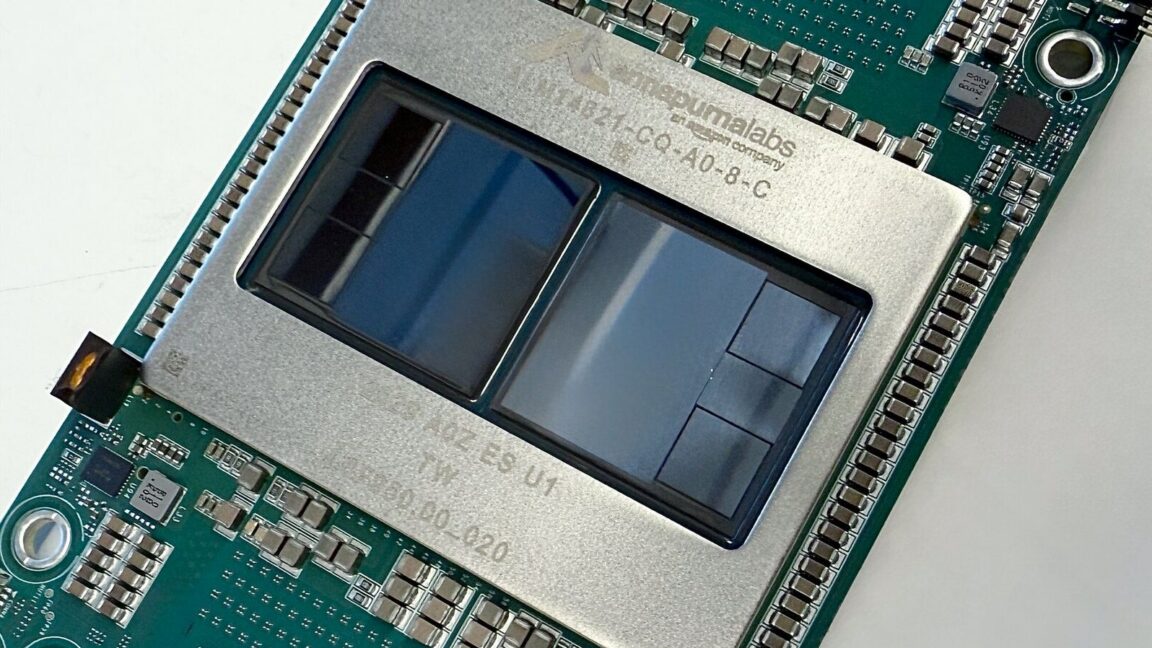

For Amazon, the deal provides a high-profile validation of its custom silicon. By moving Anthropic away from a total reliance on industry-standard Nvidia chips, Amazon is positioning its own hardware as a viable alternative for the most demanding workloads in the world. The arrangement includes a provision for an additional $20 billion in future funding, contingent on commercial milestones, a figure that underscores the staggering cost of staying relevant in the high-stakes race for artificial general intelligence.

With reporting from Ars Technica.
