In February 2025, OpenAI co-founder Andrej Karpathy gave a name to a shift in the nature of technical labor: "vibe coding." The premise is deceptively simple: instead of wrestling with syntax and logic, a user describes a desired outcome (a website, a tool, a game) to an AI model, and the machine translates that "vibe" into functional code. It is the ultimate democratization of software development, leaving imagination as the primary barrier to entry.

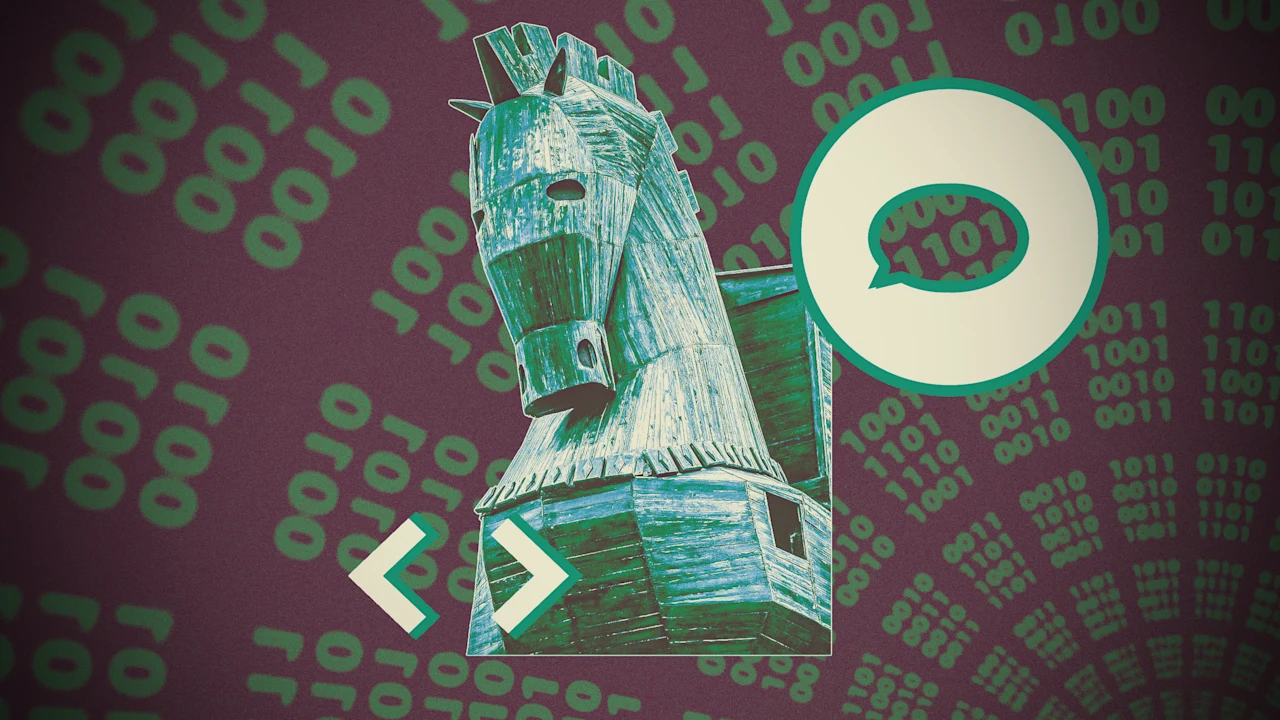

While this represents a leap in productivity, it also introduces a profound architectural opacity. When code is "vibed" into existence, the user often lacks the technical literacy to audit what the AI has actually produced. The software appears to work on the surface, but its internal logic remains a black box. This gives unvetted scripts a direct route inside a company’s security perimeter, often bypassing the oversight that IT departments traditionally provide.

The fundamental hazard lies in the question of provenance. AI models are pattern-matching engines; they synthesize code from vast datasets without regard for the intent or security posture of the original source. An employee’s clever prompt might yield a solution derived from a high-level academic project, or it might inadvertently replicate a pattern designed by a malicious actor. Because the AI is "blindingly oblivious" to the origins of its output, the responsibility for safety falls entirely on a user who may not know how to identify a vulnerability.

With reporting from Fast Company.
