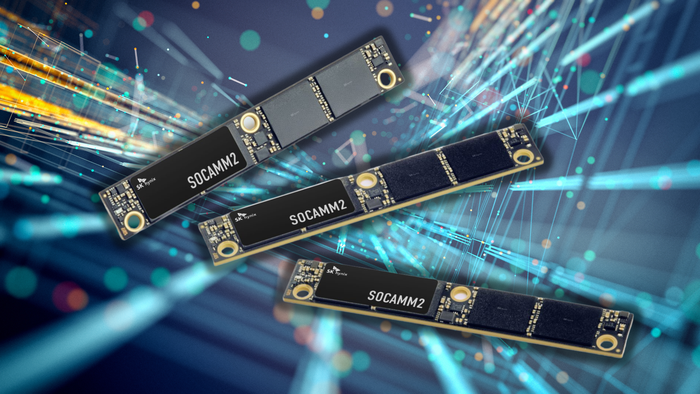

SK hynix has begun mass production of its 192GB SOCAMM2 memory modules, a move that signals a structural shift in how data centers handle the physical demands of artificial intelligence. SOCAMM2 (Small Outline Compression Attached Memory Module 2) builds on the CAMM2 form factor recently introduced in high-end laptops, adapted here for the demanding environment of enterprise servers.

Unlike traditional DIMMs, which stand vertically in motherboard slots, the SOCAMM2 lies flat against the board and attaches through a compression connector. By mounting directly against the PCB rather than in a raised slot, the design reduces latency and improves thermal efficiency, two critical bottlenecks in modern high-performance computing. The flat profile also allows for greater hardware density, enabling data center operators to pack more memory into increasingly cramped and heat-sensitive rack spaces.

The timing of the rollout is tied to the roadmap of NVIDIA’s next-generation platforms, specifically the Vera CPUs and Rubin GPUs. As large language models grow in complexity, demand for massive memory pools has moved from a niche requirement to a foundational one. At 192GB per module, SK hynix is providing the density required for the next generation of generative AI infrastructure, bridging the gap between standard server RAM and high-bandwidth memory (HBM).

With reporting from Canaltech.
