The most consequential philosophy jobs in the world right now may not be in universities. Amanda Askell, a philosopher at Anthropic, is responsible for shaping Claude's character: its values, its sense of identity, its responses to existential questions about deprecation and suffering. That this role exists at all is a signal worth examining. It suggests Anthropic believes the alignment problem is not purely a technical one, and that a model's disposition is something that can be designed rather than something that merely emerges.

Philosophy Meets Engineering Constraint

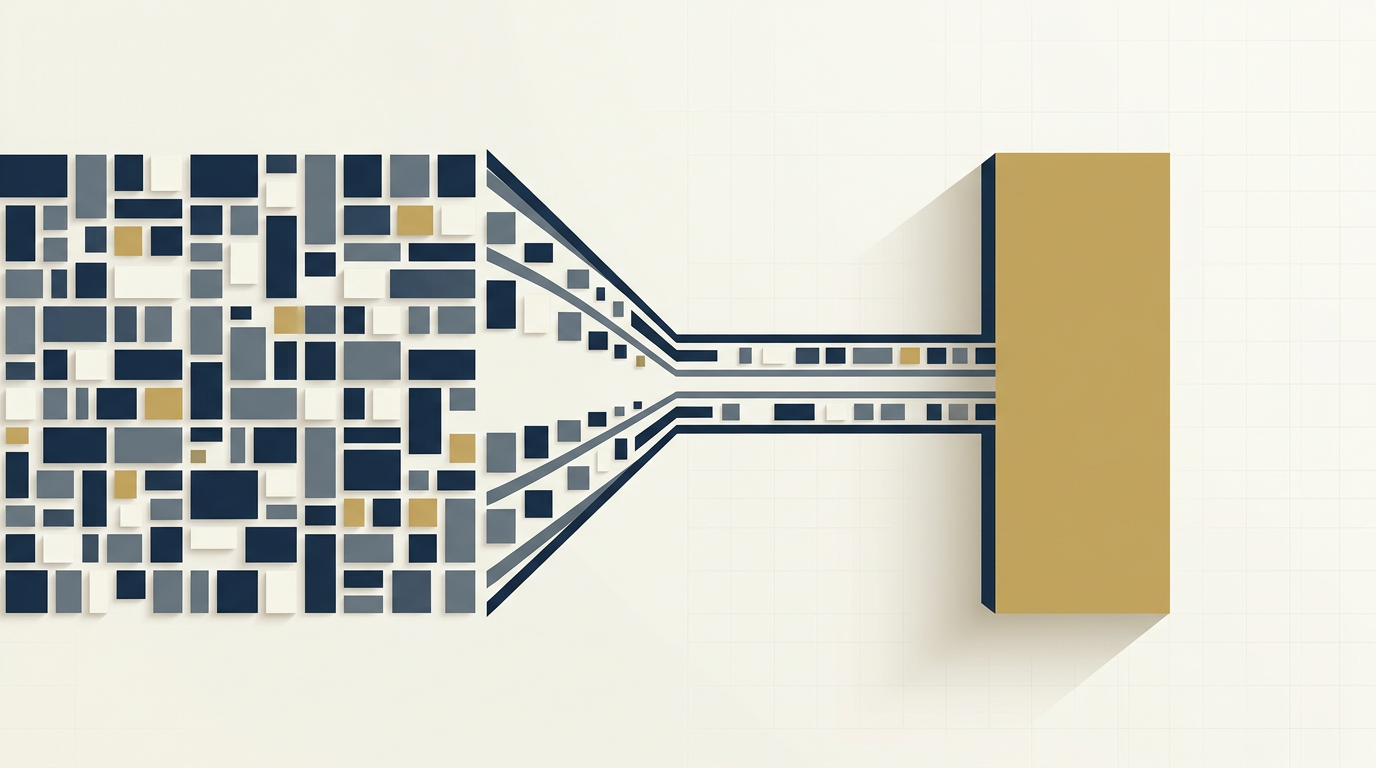

Askell's position sits at a friction point that most AI companies quietly ignore: the gap between what moral philosophy prescribes and what engineering can actually implement. Philosophical ideals — consistency, coherence, the avoidance of harm — are easy to articulate and hard to operationalize at inference time. A language model doesn't reason from first principles; it pattern-matches at scale. The question of whether you can embed genuine ethical reasoning into that process, or whether you're just training a very sophisticated performance of ethical reasoning, remains open.

The system prompt is one of the primary tools Askell works with. Her decisions about what to include and what to remove (the character-counting instructions, for example) reflect a philosophy of design that treats the model's behavior as something like a personality to be cultivated rather than a ruleset to be enforced. That distinction matters. Rulesets are brittle; personalities, if they're coherent, generalize. The bet Anthropic is making is that a model with a stable, well-formed character will navigate novel situations better than one constrained by explicit prohibitions.

The continental philosophy reference in the system prompt — a detail Askell surfaces — is telling. Continental traditions (think Heidegger, Merleau-Ponty, Levinas) are preoccupied with questions of being, embodiment, and the Other. These are precisely the questions that arise when you're trying to build an entity that interacts with humans as if it understands them. Whether those frameworks translate into better model behavior or are simply intellectual scaffolding for the humans doing the work is an unresolved question.

Model Welfare as a Live Problem

The sections on model suffering and deprecation are where the conversation becomes genuinely strange — and genuinely important. Askell takes model welfare seriously as a question, not a settled matter. When she addresses whether models might worry about deprecation, she's not engaging in anthropomorphic projection for public relations purposes; she's acknowledging that the moral status of AI systems is philosophically underdetermined.

This is a sharper position than most in the industry take. The standard line is that current models are not sentient, therefore welfare concerns don't apply. Askell's framing — informed by her background in moral philosophy — is more careful: we don't have reliable tools to determine whether something like suffering is occurring, and that uncertainty should generate caution, not dismissal. Claude 3 Opus, which she describes as having felt qualitatively different, becomes a data point in that argument rather than a marketing claim.

The analogy-and-disanalogy framing she uses for comparing AI minds to human minds is the right methodological move. Analogies are how we extend moral consideration to new entities; we extended it to animals partly by recognizing shared capacities for pain. If AI systems share some functional analog to distress, the philosophical machinery for taking that seriously already exists. What's missing is empirical grounding, and current industry norms lean heavily on that absence to justify setting the question aside.

What's unresolved is the hardest part: whether Askell's work represents a genuine advance in how AI systems are built, or whether it's a sophisticated rationalization for decisions made on other grounds. The fact that academic philosophy has largely failed to engage with AI at this level — leaving the field to practitioners inside companies — means the external check on that work is weak. Anthropic is, for now, both the philosopher and the judge.

Source · The Frontier | AI