The discourse surrounding artificial intelligence is frequently framed through the lens of historical technological transitions, with proponents often comparing the current wave of innovation to the transformative impact of the personal computer and the internet. According to reporting from Project Syndicate, however, there is a growing analytical consensus that the productivity gains from generative AI are unlikely to replicate the significant surges in output per hour observed during the late 1990s and early 2000s. While these earlier digital tools fundamentally reshaped organizational efficiency by automating routine data management and communication, the current integration of AI faces a distinct set of structural challenges.

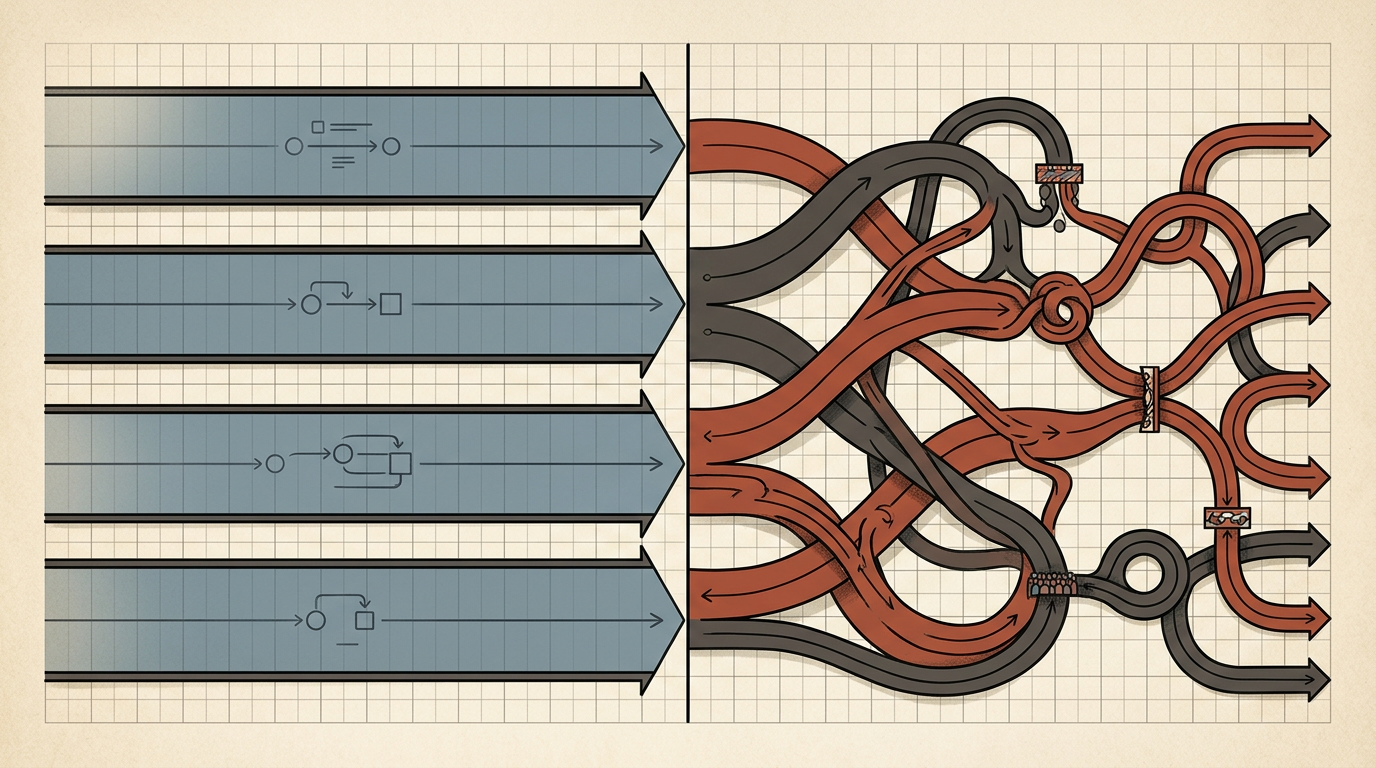

This editorial analysis posits that the primary obstacle to widespread productivity growth is not a lack of technological capability, but rather a bottleneck created by the nature of AI deployment itself. Unlike early software, which was largely plug-and-play, AI systems require complex integration into existing workflows that are often resistant to change. Examining the structural differences between these two eras makes clear that the promise of AI may be tempered by the friction of human-machine collaboration, which remains significantly more complex than the simple automation of clerical tasks that defined the computer revolution.

The Structural Divergence of Technological Eras

To understand why AI might underperform relative to expectations, one must first consider the unique nature of the computer revolution. During the 1990s, the introduction of personal computers and enterprise resource planning software allowed firms to digitize analog processes that were inherently inefficient. This transition provided a clear path to productivity: removing physical filing, speeding up internal communications, and centralizing data management. The gains were measurable and immediate because the technology replaced manual labor with automated systems that required minimal cognitive overhead for the average worker.

In contrast, artificial intelligence operates in the realm of cognitive labor, which is inherently more difficult to standardize. The computer revolution succeeded because it automated the 'what' of business processes, whereas AI attempts to augment or automate the 'how.' This creates a fundamental friction point. When a software program replaces a ledger, the productivity gain is discrete and easily tracked. When an AI agent assists with creative or analytical tasks, the output is qualitative, making it harder to measure and to standardize across an entire workforce. This inherent complexity means that the diffusion of AI is not just a technical challenge, but a management one.

Furthermore, the historical context of the 1990s included a massive expansion of global trade and supply chains that complemented digital growth. Today, the economic environment is marked by fragmentation and a focus on resilience over pure efficiency. The macroeconomic tailwinds that powered the last great productivity surge are largely absent, forcing AI to carry the burden of growth in an environment that is increasingly skeptical of rapid, disruptive change. The historical precedent of the computer revolution, therefore, provides a blueprint for what is possible, but it also highlights the limitations of expecting a similar outcome under vastly different structural conditions.

The Bottleneck of Human-Machine Integration

The mechanism by which AI impacts productivity is fundamentally different from the tools of the past. Earlier digital systems were designed to operate independently of the worker's judgment; once a system was installed, it functioned according to its programming. AI, however, requires constant human oversight and iterative feedback to remain useful. This introduces a 'coordination bottleneck' where the time required to verify, refine, and integrate AI outputs often offsets the time saved by the tool itself. This is particularly evident in high-stakes industries such as law, medicine, and engineering, where the cost of an error is substantial.

Consider the impact of large language models on professional services. While these tools can draft documents or summarize research in seconds, the human effort required to ensure accuracy and contextual relevance remains significant. If a professional spends as much time correcting an AI's output as they would have spent drafting the content from scratch, the net productivity gain is zero, and if they spend more, it turns negative. This phenomenon, which echoes what economists have long called the 'productivity paradox,' suggests that we are currently in a learning phase in which the cost of adoption, in terms of both time and human capital, is being underestimated by proponents of the technology.
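The break-even logic in this paragraph can be made concrete with a small calculation. The sketch below is illustrative only: the function name and all minute figures are hypothetical assumptions, not numbers from the reporting.

```python
def net_minutes_saved(manual_draft_min, ai_draft_min, review_min):
    """Minutes saved per task when AI drafting plus human review
    replaces drafting by hand. A negative result means the tool costs time."""
    return manual_draft_min - (ai_draft_min + review_min)


# Hypothetical figures, chosen only to illustrate the three cases.
light_review = net_minutes_saved(manual_draft_min=60, ai_draft_min=1, review_min=20)
break_even = net_minutes_saved(manual_draft_min=60, ai_draft_min=1, review_min=59)
heavy_review = net_minutes_saved(manual_draft_min=60, ai_draft_min=1, review_min=75)

print(light_review)  # 39: a clear gain
print(break_even)    # 0: review time has consumed the entire saving
print(heavy_review)  # -16: drafting by hand would have been faster
```

Under these assumptions, the drafting speed of the model barely matters; the review time determines whether there is any gain at all.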

Moreover, the incentive structures within organizations often work against the full realization of AI-driven productivity. In many firms, the goal of adopting AI is not to reduce headcount but to increase the volume of output, which can lead to a 'make-work' trap. If employees are using AI to generate more content or more complex reports that are not strictly necessary, the firm's overall output per hour may remain stagnant even as the total volume of work increases. The technology is being used to expand the scope of tasks rather than to streamline the core business, a dynamic that is difficult to capture in traditional productivity metrics.
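A rough numerical sketch shows how the 'make-work' trap can hide behind rising volume. The function name and every figure below are hypothetical assumptions introduced for illustration; in practice, deciding which output counts as necessary is itself a judgment call.

```python
def per_hour(units, hours):
    """Units produced per hour worked."""
    return units / hours


HOURS = 40  # hypothetical working week, unchanged by AI adoption

# Before adoption: 10 reports produced, all of them needed.
# After adoption: 25 reports produced, but still only 10 are needed.
volume_before = per_hour(units=10, hours=HOURS)
volume_after = per_hour(units=25, hours=HOURS)
needed_before = per_hour(units=10, hours=HOURS)
needed_after = per_hour(units=10, hours=HOURS)

print(volume_before, volume_after)  # 0.25 0.625
print(needed_before, needed_after)  # 0.25 0.25
```

In this example, measured volume per hour rises by a factor of 2.5 while necessary output per hour does not move, which is the stagnation the paragraph describes.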

Implications for Stakeholders and Regulators

For regulators, the implications of this potential productivity stagnation are profound. If AI fails to deliver the expected economic growth, the fiscal burden of supporting aging populations and investing in infrastructure will become increasingly difficult to manage. Policymakers have largely banked on the assumption that AI-led productivity would provide the necessary tax revenue to fund future social programs. If those gains are more incremental than exponential, governments may be forced to reconsider their fiscal strategies, potentially leading to increased taxation or a reduction in public services.

For competitors and industry leaders, the focus must shift from the 'AI-first' narrative to a more pragmatic 'AI-integrated' strategy. Firms that prioritize the slow, deliberate implementation of AI in specific, high-value areas are likely to outperform those that attempt to overhaul their entire operations simultaneously. The competitive advantage will not go to the company with the most powerful model, but to the firm that best manages the integration of human judgment with machine efficiency. This suggests a period of consolidation where the current hype cycle gives way to a more disciplined focus on operational excellence and long-term value creation.

Outlook and Open Questions

What remains uncertain is whether this productivity bottleneck is a permanent feature of AI or merely a symptom of the current developmental phase. As models become more reliable and the workforce becomes more adept at 'prompting' and managing AI systems, the friction of integration may decrease. However, the history of technological diffusion suggests that this transition will take longer than the market expects. We are likely to see a prolonged period of 'hidden' productivity growth, where the benefits are felt in quality and innovation rather than in the traditional metrics of output per hour.

Moving forward, the primary metric to watch will be the rate of capital expenditure on AI relative to its measurable impact on corporate earnings. If firms continue to pour capital into AI infrastructure without seeing a corresponding increase in operating margins, the current enthusiasm will inevitably cool. The question of whether AI will eventually trigger a productivity boom remains open, but for now, the analysis suggests that we should temper our expectations and focus on the structural hurdles that lie ahead.
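One simple way to track this relationship is as a ratio of earnings change to AI spending. The sketch below is an assumption about how such a metric might be computed, not a method taken from the reporting; the function name and the figures are hypothetical.

```python
def earnings_gain_per_capex_unit(ai_capex, earnings_change):
    """Change in earnings per unit of AI capital expenditure.
    Both arguments are in the same currency units for the same period."""
    if ai_capex <= 0:
        raise ValueError("ai_capex must be positive")
    return earnings_change / ai_capex


# Hypothetical firm: 200 (million) spent on AI, earnings up by 50 (million).
print(earnings_gain_per_capex_unit(ai_capex=200, earnings_change=50))  # 0.25

# Hypothetical firm with the same spending and no earnings improvement.
print(earnings_gain_per_capex_unit(ai_capex=200, earnings_change=0))   # 0.0
```

A ratio that stays near zero over several periods while spending keeps rising would be the warning sign described above, though a single period says little given how slowly integration gains may appear.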

As the deployment of artificial intelligence continues to evolve, the distinction between hype and reality will become increasingly apparent. The path forward for businesses and policymakers alike will require a sober assessment of how this technology fits into the broader economic landscape, acknowledging that the most significant gains may come not from the tools themselves, but from how effectively they are integrated into human-centric workflows.

With reporting from Project Syndicate