On Friday, Chinese AI firm DeepSeek released a preview of V4, its new flagship model, in two versions — V4-Pro, built for coding and complex agent tasks, and V4-Flash, a lighter variant designed for speed and cost efficiency. Both versions can process up to one million tokens of context, a significant leap from the company's previous generation, according to MIT Technology Review reporting.

The release is DeepSeek's most substantial since R1, the reasoning model that caught the global AI industry off guard in January 2025 with its strong performance on limited compute. But where R1's story was about training efficiency, V4's contribution may be more structurally significant: it introduces an architectural rethinking of how AI models handle long text, compressing older information while preserving nearby detail, and in doing so dramatically reduces the cost of long-context inference. The implications extend well beyond a single model release — they touch the economics of building AI-powered applications at scale.

Rethinking Attention, Not Just Expanding It

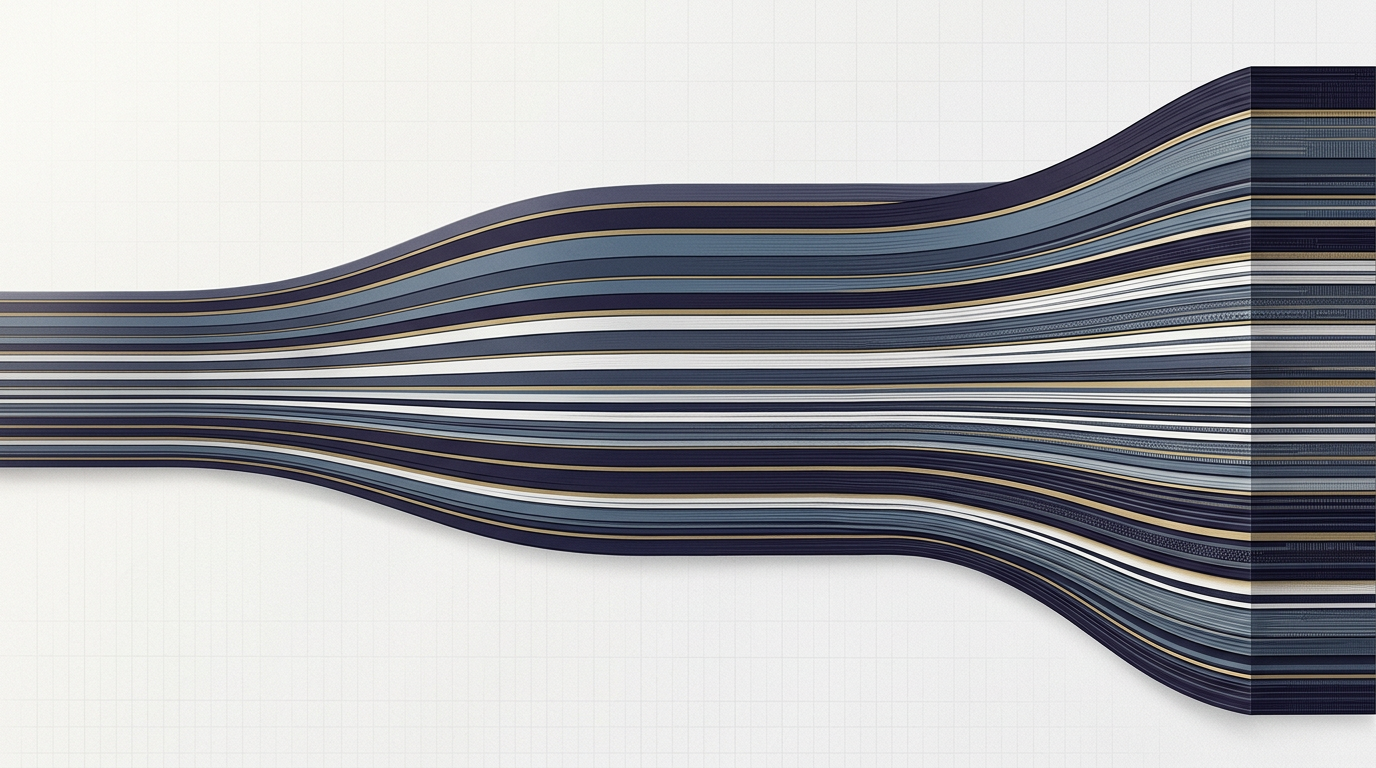

The central technical innovation in V4 lies in its attention mechanism, the component of a language model responsible for working out how each part of a prompt relates to every other part. In standard attention, the computational cost of this process grows roughly quadratically with input length, so doubling the prompt roughly quadruples the work, which makes long-context windows one of the most expensive features to support. Most frontier models have addressed this by simply throwing more hardware at the problem. DeepSeek took a different path.
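To make that scaling concrete, here is a minimal sketch. It assumes standard full self-attention, in which every token is scored against every other token. The function name `attention_pairs` is illustrative and does not come from DeepSeek's report, and the count ignores per-head and per-layer multipliers.

```python
def attention_pairs(num_tokens: int) -> int:
    """Count token-to-token scores in full (non-causal) self-attention.

    Assumption: every token attends to every token, so the count is n * n.
    Real models multiply this by the number of heads and layers.
    """
    if num_tokens < 0:
        raise ValueError("num_tokens must be non-negative")
    return num_tokens * num_tokens


if __name__ == "__main__":
    # Each 10x increase in prompt length means 100x more pairwise scores.
    for n in (1_000, 10_000, 100_000, 1_000_000):
        print(f"{n:>9,} tokens -> {attention_pairs(n):,} pairwise scores")
```

At one million tokens the naive count reaches a trillion scores per head per layer, which is why long context is costly without some form of selectivity.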

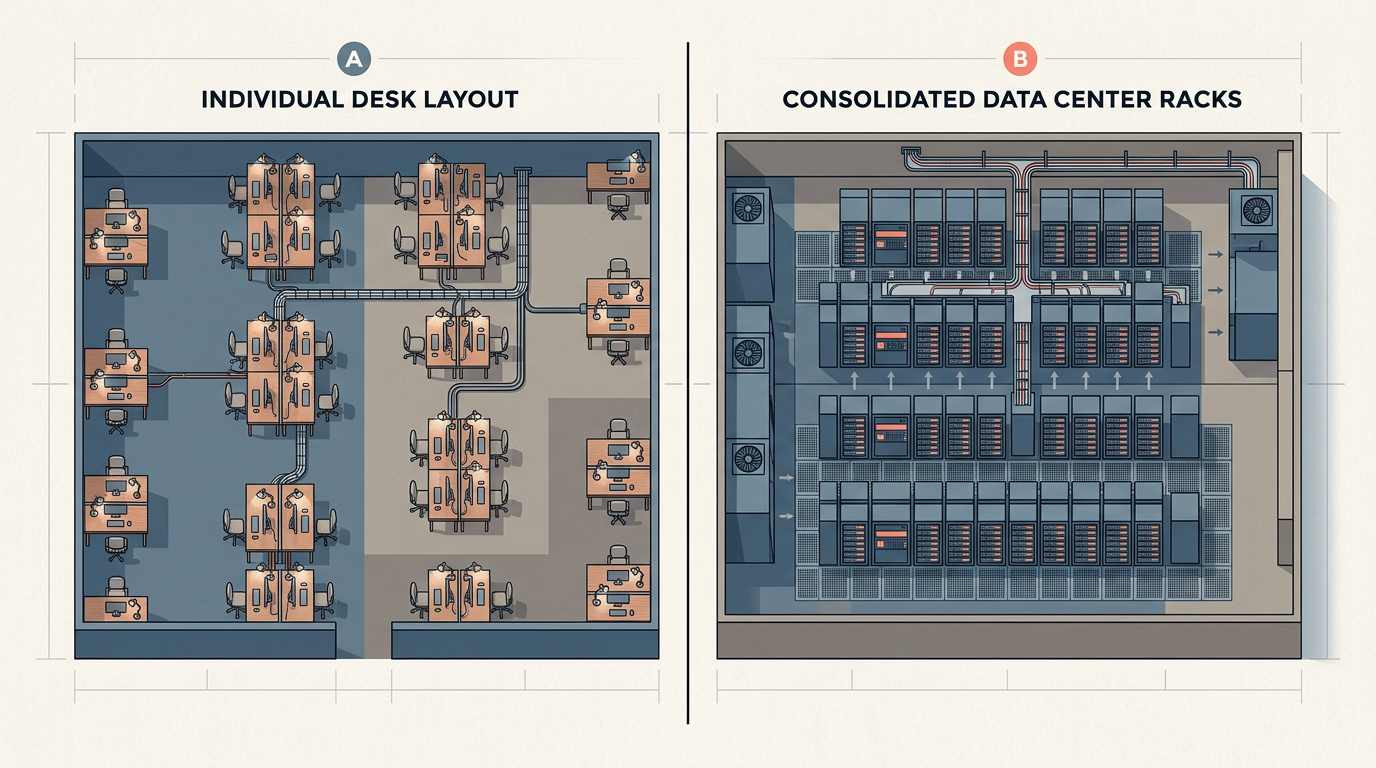

V4's architecture makes the model more selective about what it attends to. Rather than treating all prior text as equally relevant, it compresses older context and concentrates processing power on the portions most likely to matter at any given step, while retaining nearby text at full resolution. According to DeepSeek's technical report, this reduces V4-Pro's compute requirements in a one-million-token context to just 27% of what its predecessor V3.2 needed, while cutting memory usage to 10%. V4-Flash goes further still: 10% of compute, 7% of memory. These are not marginal gains — they represent a fundamentally different cost curve for long-context inference, one that could make applications like codebase-wide AI assistants or long-document research agents economically viable in ways they previously were not.
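The general idea of compressing older context while keeping nearby text at full resolution can be illustrated with a toy sketch. This is not DeepSeek's mechanism, which the article does not describe in detail. Mean pooling over fixed-size blocks is a stand-in chosen for simplicity, and `compress_context`, `window`, and `block` are hypothetical names.

```python
from statistics import fmean


def compress_context(values, window, block):
    """Toy illustration only; NOT DeepSeek's actual method.

    Keeps the most recent `window` entries unchanged and replaces everything
    older with one averaged summary per `block` consecutive entries.
    """
    if window < 0 or block < 1:
        raise ValueError("window must be >= 0 and block must be >= 1")
    split = max(len(values) - window, 0)
    older, recent = values[:split], values[split:]
    # Summarise older entries block by block (the last block may be shorter).
    summaries = [fmean(older[i:i + block]) for i in range(0, len(older), block)]
    return summaries + recent


if __name__ == "__main__":
    history = [float(i) for i in range(10)]
    compact = compress_context(history, window=4, block=3)
    print(len(history), "entries reduced to", len(compact))
    print(compact)
```

The model then has far fewer entries to store and attend over, which is the intuition behind lower compute and memory at long context lengths. A production system would compress learned representations rather than raw numbers.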

Open Source, Closed Gap

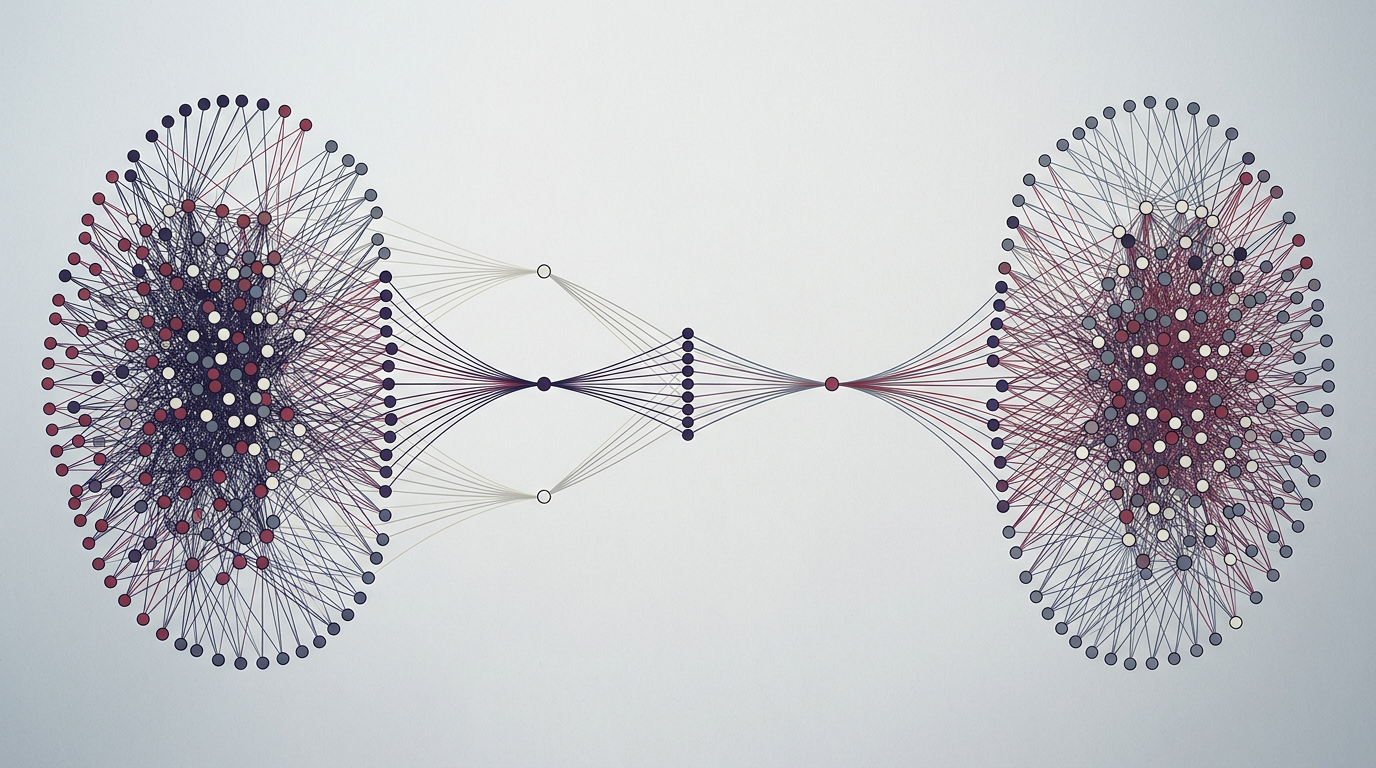

DeepSeek's decision to release V4 as open source amplifies the architectural breakthrough. According to benchmark results shared by the company, V4-Pro matches the performance of leading closed-source models from Anthropic, OpenAI, and Google, while exceeding other open-source alternatives from Alibaba and Z.ai on coding, math, and STEM tasks. At $1.74 per million input tokens for V4-Pro and roughly $0.14 for V4-Flash, the pricing undercuts major competitors by a wide margin.
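For a rough sense of what those input prices mean per request, the arithmetic can be sketched as follows. The prices are the ones quoted above, with V4-Flash being approximate. Output-token pricing is not given in the article and is left out, and the workload in the usage example is a hypothetical.

```python
# Input prices in USD per million tokens, as quoted in the article.
# The V4-Flash figure is approximate ("roughly $0.14").
PRICE_PER_MILLION_INPUT = {"V4-Pro": 1.74, "V4-Flash": 0.14}


def input_cost_usd(model: str, input_tokens: int) -> float:
    """Estimated input-side cost of one request; output tokens are not included."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")
    return PRICE_PER_MILLION_INPUT[model] * input_tokens / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 200 prompts per day, each filling the 1M-token window.
    prompts_per_day = 200
    for model in PRICE_PER_MILLION_INPUT:
        daily = prompts_per_day * input_cost_usd(model, 1_000_000)
        print(f"{model}: ${daily:,.2f} per day on input tokens")
```

Because these figures cover input tokens only, the total bill for a real workload would be higher once output tokens are counted.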

For the broader AI ecosystem, this creates a meaningful tension. If an open-source model can deliver frontier-level performance at a fraction of the cost, and do so with dramatically lower memory requirements, the value proposition of closed-source API access becomes harder to justify for many use cases. Developers building on V4 gain not just price advantages but architectural flexibility: the ability to run and modify models on their own infrastructure, tuned to their own needs. DeepSeek's report noted that in an internal survey of 85 experienced developers, more than 90% ranked V4-Pro among their top choices for coding tasks. Because the survey was small and run by the company itself, whether that preference holds at scale remains to be seen, but it is an encouraging early signal.

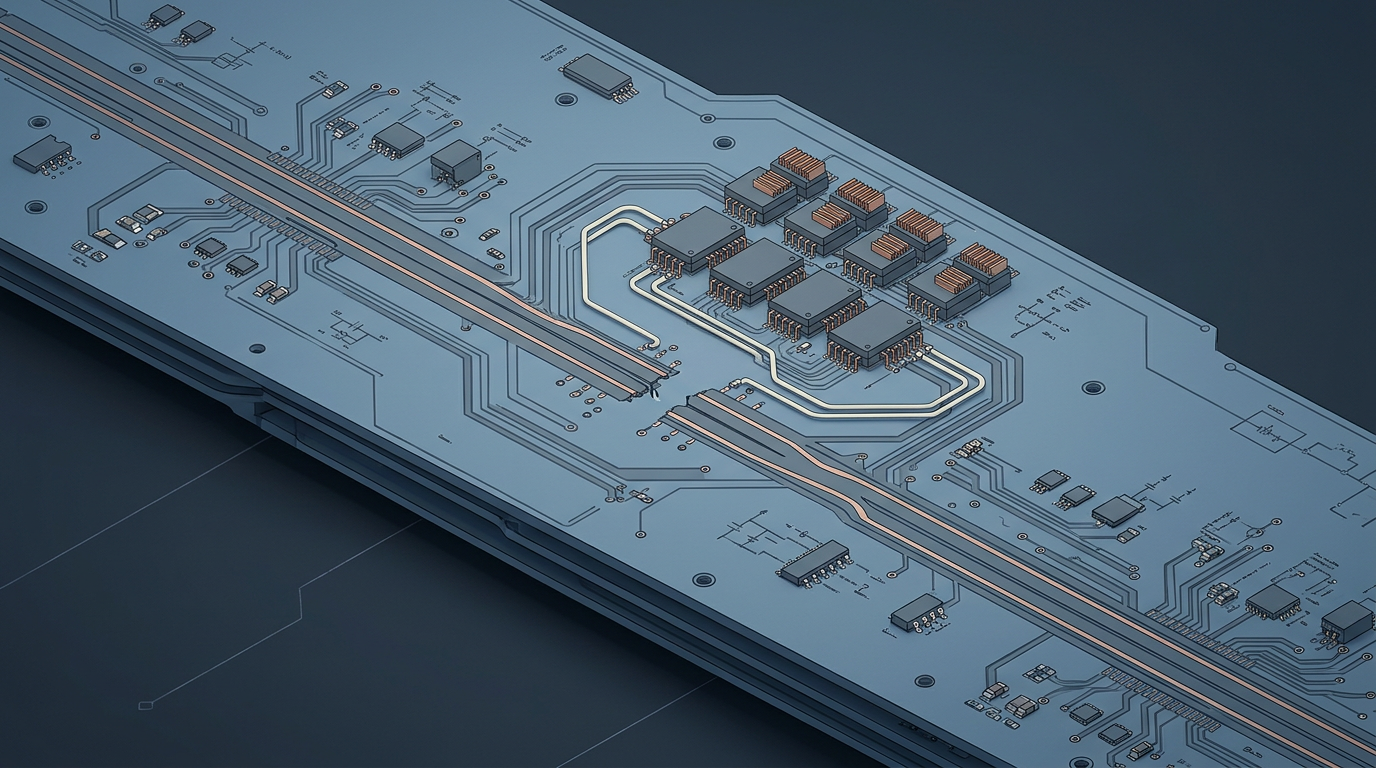

The release also carries geopolitical weight. V4 is DeepSeek's first model optimized for domestic Chinese chips, including Huawei's Ascend series — a development that tests whether China's push for semiconductor self-reliance can extend into frontier AI. DeepSeek does not appear to have fully moved beyond Nvidia; its technical report indicates Chinese chips are being used primarily for inference, not necessarily for the full training pipeline. But the direction of travel is unmistakable, and V4's memory-efficient architecture may prove particularly well-suited to hardware that lacks Nvidia's raw power but can handle lighter inference workloads.

As the economics of long-context AI shift and the open-source frontier continues to narrow the gap with proprietary models, the question is no longer whether efficient architectures can compete — but how quickly the rest of the industry adapts to a world where they do.

With reporting from MIT Technology Review
