The landscape of corporate artificial intelligence is currently characterized by a profound disconnect between the ubiquity of tools like ChatGPT and their actual impact on organizational productivity. According to reporting from Inc. Magazine, a collaborative study between researchers at Harvard and OpenAI has surfaced data suggesting that while AI adoption is high at the individual level, it has yet to translate into the systemic, large-scale shifts that many executives originally anticipated. The findings indicate that most employees use these platforms for discrete, ad-hoc tasks rather than integrating them into core business workflows.

This discrepancy creates a significant challenge for organizational leaders who have invested heavily in AI infrastructure under the assumption of immediate, transformative ROI. The thesis emerging from this data is that the current phase of AI adoption is defined by 'shallow integration,' where the technology serves as a digital assistant for personal efficiency rather than a catalyst for structural business change. As organizations navigate this transition, they must confront the reality that scaling AI requires more than just access to large language models; it necessitates a fundamental reassessment of how work is structured and how value is defined within the corporate hierarchy.

The Architecture of Experimental Usage

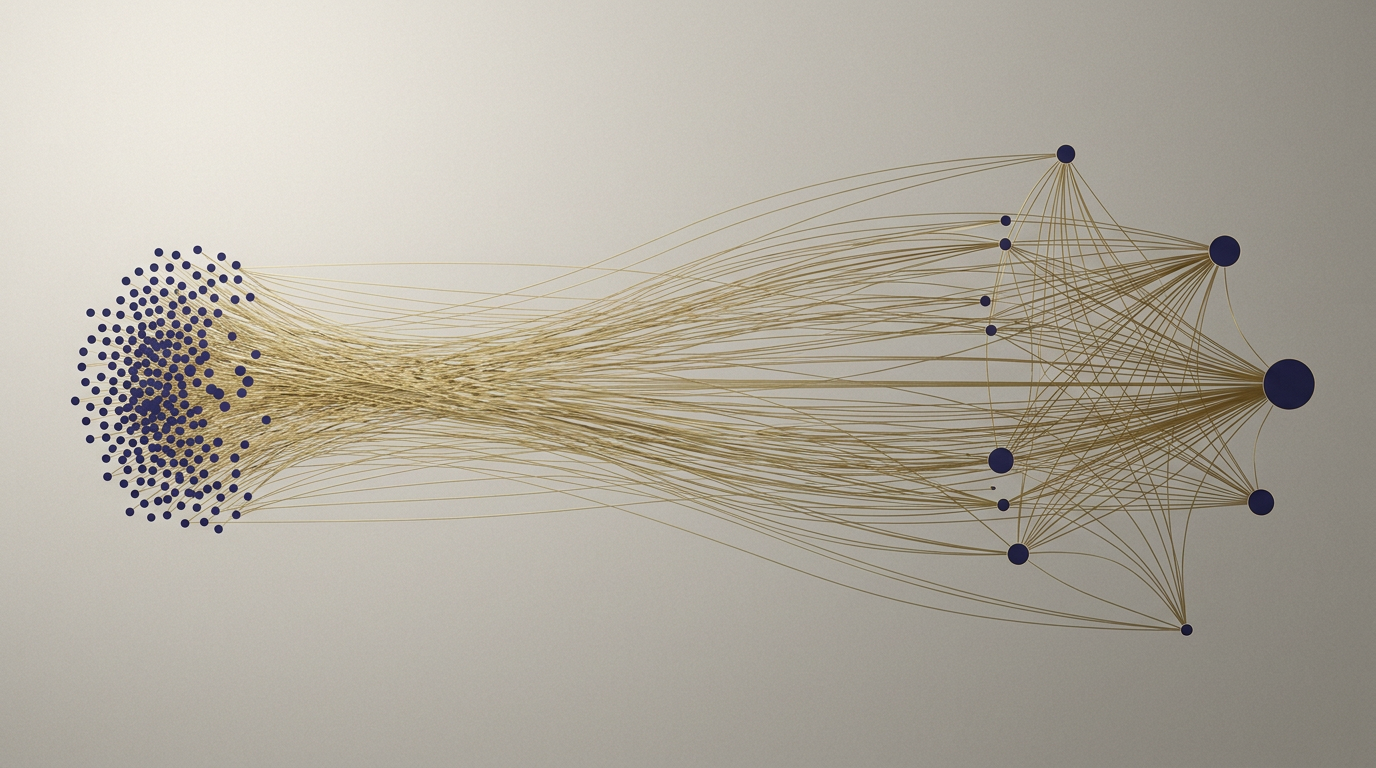

The primary hurdle to scaling AI lies in the nature of the experimentation currently taking place across the enterprise. When employees are left to their own devices, they naturally gravitate toward low-friction, high-visibility tasks—such as drafting emails, summarizing meeting notes, or refining brief pieces of code. While these applications provide tangible benefits to individual contributors, they do not constitute a strategic adoption of AI. This creates a state of 'experimental inertia,' where the sheer volume of low-level usage provides a false sense of progress, masking a lack of deep, process-oriented implementation.

Historically, this pattern mirrors the early days of personal computing or the initial rollout of cloud services, when the technology was first adopted through 'shadow IT' or by individual power users before being standardized by the enterprise. However, generative AI presents a distinct structural challenge because it interacts directly with the cognitive processes of the workforce. Cloud storage is a passive utility, but AI acts as an active participant in decision-making. That role introduces risks related to accuracy, bias, and intellectual property that individual experimentation rarely addresses.

Furthermore, the lack of centralized guidance often leads to a fragmented ecosystem of AI tools within a single organization. When different departments adopt different models or workflows without a cohesive strategy, the result is a siloed environment that prevents the cross-pollination of data and insights. This structural fragmentation is a hallmark of an organization that is still in the 'novelty' phase, treating AI as a suite of external applications rather than an integrated layer of the corporate technology stack.

Mechanisms of Institutional Friction

To understand why this gap persists, one must examine the incentive structures within the modern corporation. Most management systems are designed to reward predictable, repeatable outcomes, whereas the effective deployment of generative AI often requires a degree of trial and error that can be uncomfortable for risk-averse leadership. When the potential for error—or 'hallucination'—is high, the natural organizational response is to restrict usage to non-critical tasks. This creates a self-reinforcing cycle where AI is kept on the periphery of the business, preventing it from ever being stress-tested in mission-critical environments.

Moreover, the barrier to scaling is not merely technical but cultural. The transition from using AI as a 'chatbot' to using it as a 'system' requires a shift in the definition of expertise. Employees who were previously valued for their ability to perform specific, manual knowledge work must now pivot to become orchestrators of AI-driven processes. This shift requires a level of transparency and trust that many organizations have yet to cultivate. Without clear governance frameworks, employees may be hesitant to integrate AI into their core responsibilities, fearing that the technology could either replace their role or expose their work to unforeseen vulnerabilities.

This mechanism of caution is compounded by the rapid pace of model iteration. Organizations that attempt to build rigid, long-term AI strategies often find their plans obsolete within months, as new capabilities emerge from providers like OpenAI. This creates a 'wait-and-see' mentality among senior leadership, who are wary of committing to a specific technical architecture when the underlying technology is still in such a state of flux. Consequently, the organization remains in a state of perpetual experimentation, never fully committing to the deep integration required to see transformative results.

Implications for Stakeholders and Regulators

For regulators, the current state of AI adoption presents a complex challenge. As long as usage remains experimental and decentralized, it is difficult to establish clear standards for safety, transparency, and accountability. Regulators are increasingly looking at how these tools interact with sensitive corporate data, yet they are often chasing a moving target. If organizations do not move toward a more structured, centralized approach to AI, the risk of data leakage and systemic failure increases, potentially inviting heavier-handed regulatory intervention that could stifle innovation across the board.

For competitors, the gap between experimentation and scaling represents an opportunity for differentiation. Companies that can successfully navigate the 'valley of death'—the period between initial pilot projects and full-scale deployment—will likely gain a significant productivity advantage. This requires a shift in focus from the novelty of the models themselves to the robustness of the workflows they support. Those who treat AI as a core competency, rather than a peripheral tool, will be better positioned to weather the volatility of the current market and establish a sustainable competitive moat.

The Outlook for Enterprise Intelligence

The uncertainty surrounding the future of AI adoption remains rooted in the question of whether organizations can evolve their internal cultures fast enough to match the pace of technological advancement. It is unclear whether the current model of 'bottom-up' experimentation will eventually coalesce into a coherent strategy or will simply lead to a plateau of marginal productivity gains. The next phase of development will likely place greater emphasis on 'agentic' workflows, in which AI systems perform multi-step tasks with minimal human intervention. Such workflows would force a much more rigorous debate about organizational governance.

As the industry matures, the focus will likely shift from the sheer volume of AI usage to the quality and integration of those applications. Leaders must determine which processes are ripe for automation and which require the unique, nuanced judgment of human experts. The tension between agility and control will continue to define the discourse for the foreseeable future, as organizations seek to balance the benefits of rapid innovation with the necessity of operational stability and risk management.

Ultimately, the transition from experimental novelty to systemic integration is not a destination but a continuous process of calibration. Organizations that succeed will be those that recognize that AI is not merely a tool to be adopted, but a fundamental shift in the way knowledge work is organized and executed. As the technology continues to evolve, the challenge for leadership will be to foster an environment where experimentation is encouraged, but ultimately subordinate to a clear, value-driven strategic vision.

With reporting from Inc. Magazine