The integration of artificial intelligence into clinical environments has transitioned from a theoretical promise to a ubiquitous reality. Across medical centers globally, clinicians are increasingly utilizing AI tools to streamline administrative burdens, such as transcribing patient consultations, and to assist in complex diagnostic tasks like interpreting medical imaging and analyzing longitudinal patient records. According to reporting from MIT Technology Review, while early studies suggest these tools demonstrate high levels of technical precision, a fundamental question remains unanswered: does this technological infusion actually translate into improved health outcomes for patients?

This discrepancy between technical capability and clinical effectiveness has prompted experts to call for more rigorous evaluation of AI deployment. Scientists such as Jenna Wiens of the University of Michigan and Anna Goldenberg of the University of Toronto have pointed out that adoption often outpaces clinical validation. The editorial thesis here is clear: the healthcare sector is prioritizing the promise of efficiency over the empirical necessity of proving that these tools enhance the quality of care. The result is a precarious environment in which clinical decision-making is increasingly mediated by algorithms whose long-term impacts remain largely unstudied.

The Illusion of Technical Precision

The enthusiasm surrounding AI in medicine is largely driven by its ability to solve immediate, high-friction problems. Ambient AI assistants, which capture and summarize doctor-patient interactions, have been lauded for reducing physician burnout and allowing for more focused interpersonal engagement during visits. These tools provide a measurable improvement in operational workflow and clinician satisfaction, which serves as a powerful incentive for rapid institutional adoption. However, operational efficiency is not synonymous with clinical efficacy. A tool that saves a physician time is not inherently a tool that improves a patient's prognosis.

The structural challenge lies in the definition of success. Developers and hospital administrators often measure the success of an AI tool by its accuracy in transcribing notes or identifying patterns in a dataset. Yet, clinical medicine is a socio-technical system where the interaction between the physician, the patient, and the data is far more complex than a binary output. When a tool correctly identifies a medical anomaly in an X-ray, the true clinical value is only realized if the physician effectively integrates that information into a treatment plan that ultimately benefits the patient. If the tool is accurate but the clinician does not trust it, or if it disrupts the established diagnostic workflow in unforeseen ways, the potential benefit is nullified.

Mechanisms of Cognitive and Clinical Shift

The mechanism by which AI alters healthcare is not merely additive; it is transformative, potentially reshaping how medical professionals process information and interact with patients. Research into AI-assisted education suggests that these tools can influence cognitive processing, raising significant questions about the long-term impacts on medical training. If students and junior doctors begin to rely heavily on AI-driven summaries and diagnostic suggestions, there is a risk that they may lose the ability to critically synthesize complex patient data without algorithmic intervention. This shift could fundamentally change the nature of clinical intuition, a cornerstone of medical practice.

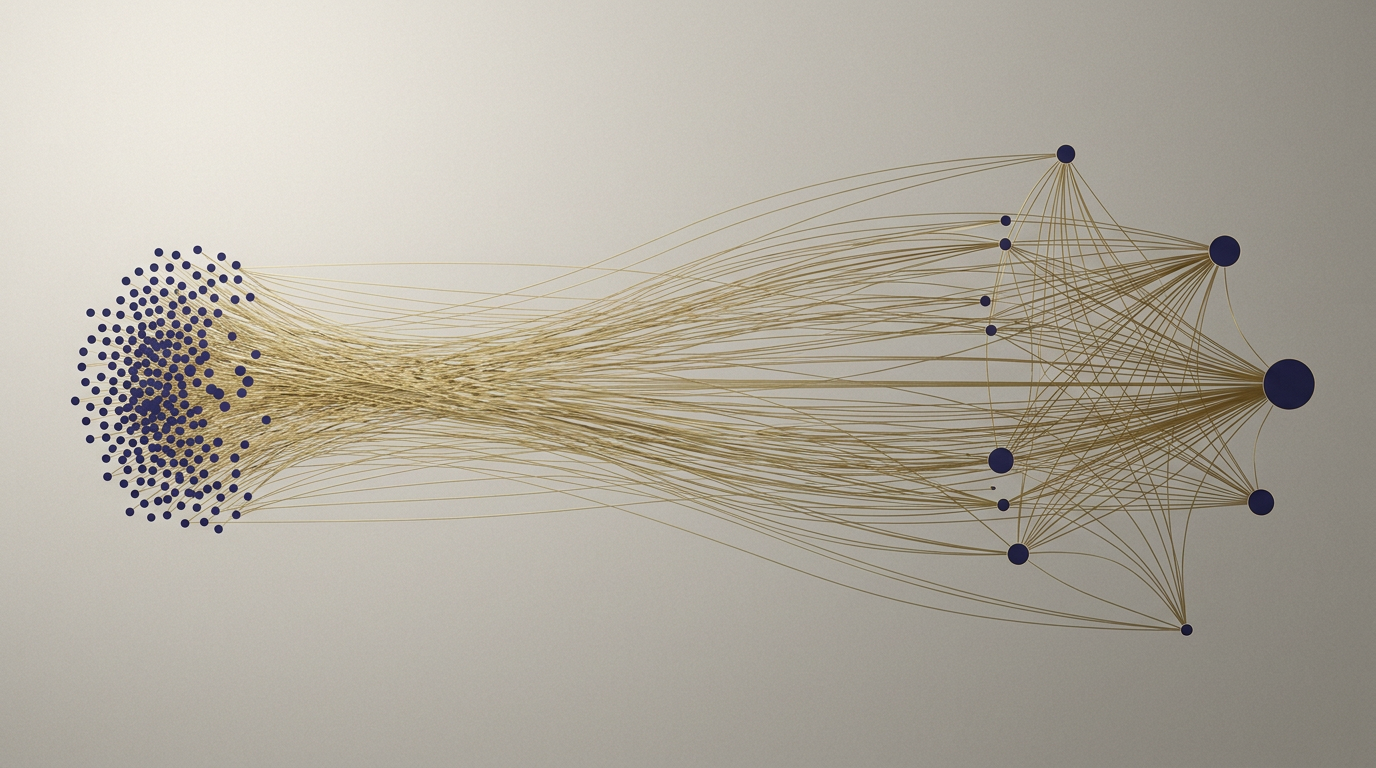

Furthermore, the integration of these tools creates a feedback loop that is difficult to audit. A study published in early 2025 by researchers at the University of Minnesota found that a significant majority of U.S. hospitals were using AI-assisted prognostic tools. Crucially, however, only a fraction of these institutions had rigorously evaluated the accuracy of these systems, and even fewer had scrutinized them for potential bias. When hospitals implement these systems without localized validation, they risk importing biases inherent in the training data, which can lead to disparate treatment outcomes across patient demographics. Relying on vendor-provided metrics rather than independent, context-specific assessment creates a blind spot in the clinical decision-making process.

Implications for Stakeholders and Regulators

The implications of this unchecked expansion are far-reaching for all stakeholders involved. For regulators, the challenge is to define a framework that encourages innovation while mandating evidence of clinical utility. Current regulatory pathways often focus on the safety and accuracy of the software as a product, but they are less equipped to mandate long-term studies on how the software affects the actual health outcomes of a diverse patient population. For healthcare providers, the risk is that they may be held liable for decisions made in conjunction with AI tools that they do not fully understand, creating a new layer of medical-legal complexity.

Competitors in the health-tech space are currently locked in a race to capture market share, often prioritizing feature sets that appeal to hospital administrators looking for cost-saving measures. This competitive dynamic incentivizes speed over depth, leaving the patient to navigate a system where the diagnostic and treatment paths are increasingly influenced by unverified algorithms. The tension between the desire for efficient, scalable healthcare and the need for evidence-based practice is likely to become a defining conflict in the coming decade, necessitating a shift in how hospitals procure and deploy new technologies.

The Outlook for Evidence-Based AI

What remains uncertain is whether the healthcare industry can pivot from its current 'move fast' mentality toward a more deliberate, evidence-based approach to AI. The question is not whether AI has a place in medicine (it clearly does) but how that place is defined and validated. As adoption continues to accelerate, the absence of standardized protocols for evaluating clinical outcomes remains a significant vulnerability. Future developments will need to focus on longitudinal studies that track patient outcomes specifically in relation to the use of these tools, rather than relying on technical benchmarks alone.

Watchers of the sector should look for signs of increased regulatory scrutiny and the emergence of independent audit bodies dedicated to clinical AI validation. The goal should not be to halt the adoption of these technologies, but to reach a state of maturity where AI is treated as a medical intervention requiring the same level of scrutiny as a new drug or surgical procedure. The path forward involves a more cautious integration, one that treats algorithmic assistance as a supplement to, rather than a replacement for, rigorous clinical judgment.

As the integration of artificial intelligence into clinical practice continues to deepen, the industry faces a critical juncture. The promise of the technology is undeniable, yet the gap between technical output and clinical reality remains wide. Whether the healthcare sector can bridge this divide through more rigorous evaluation and a renewed focus on patient-centered outcomes, rather than just efficiency, remains the central question for the next generation of medical technology.

With reporting from MIT Tech Review Brasil
