The AI Scaling Cliff Is Real—And Industry Leaders Know It

For the better part of five years, the dominant thesis in artificial intelligence held that bigger was better. More parameters, more data, more compute—each increment yielding predictable, measurable gains in model performance. That thesis is now under visible strain. In a wide-ranging conversation spanning roughly four hours, machine learning researchers Nathan Lambert of the Allen Institute for AI (AI2) and Sebastian Raschka laid out a landscape in which the governing mathematics of AI progress—known as scaling laws—are delivering diminishing returns, even as the geopolitical stakes around the technology continue to escalate.

The structure of the discussion itself is telling. Nearly an hour was devoted to scaling laws and training methodologies before the conversation moved to post-training techniques, alternative architectures, monetization strategies, and acquisition scenarios. That progression mirrors the shifting center of gravity within the field: the era of straightforward, brute-force scaling is giving way to something more complex and less certain.

From Brute Force to Precision Engineering

Scaling laws, first formalized in influential research from OpenAI around 2020, described power-law relationships that tie a model's loss (a proxy for performance) to three inputs: the model's size, the volume of training data, and the compute budget. For several years, these relationships held with remarkable consistency. Each new generation of large language models appeared to confirm the thesis: spend more, get more.
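To make the shape of a power law concrete, here is a minimal sketch in Python. It assumes a single-variable form, loss = (n_c / N) ** alpha, and the constants `N_C` and `ALPHA` are illustrative placeholders chosen for this example, not figures from the conversation or from any published fit. The function names are likewise hypothetical. The point is only that under such a curve, every tenfold increase in model size shrinks the loss by the same ratio, so the absolute gain bought by each increment gets smaller.

```python
# Illustrative constants only: assumed for this sketch, not taken from the article
# or from any published scaling-law fit.
N_C = 1e14    # assumed scale constant, in parameters
ALPHA = 0.08  # assumed power-law exponent


def power_law_loss(n_params: float, n_c: float = N_C, alpha: float = ALPHA) -> float:
    """Loss predicted by a single-variable power law: (n_c / n_params) ** alpha."""
    return (n_c / n_params) ** alpha


def gains_between(sizes):
    """Absolute loss reduction between each pair of consecutive model sizes."""
    losses = [power_law_loss(n) for n in sizes]
    return [prev - nxt for prev, nxt in zip(losses, losses[1:])]


if __name__ == "__main__":
    # Each size is 10x the previous one.
    sizes = [1e8, 1e9, 1e10, 1e11, 1e12]
    for n in sizes:
        print(f"{n:.0e} params -> predicted loss {power_law_loss(n):.3f}")
    print("absolute gain per 10x:", [round(g, 3) for g in gains_between(sizes)])
```

Running the script prints a loss that keeps falling while the gain per tenfold step shrinks. That is diminishing absolute return built into the curve itself, which is a separate matter from whether real models still follow the curve.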

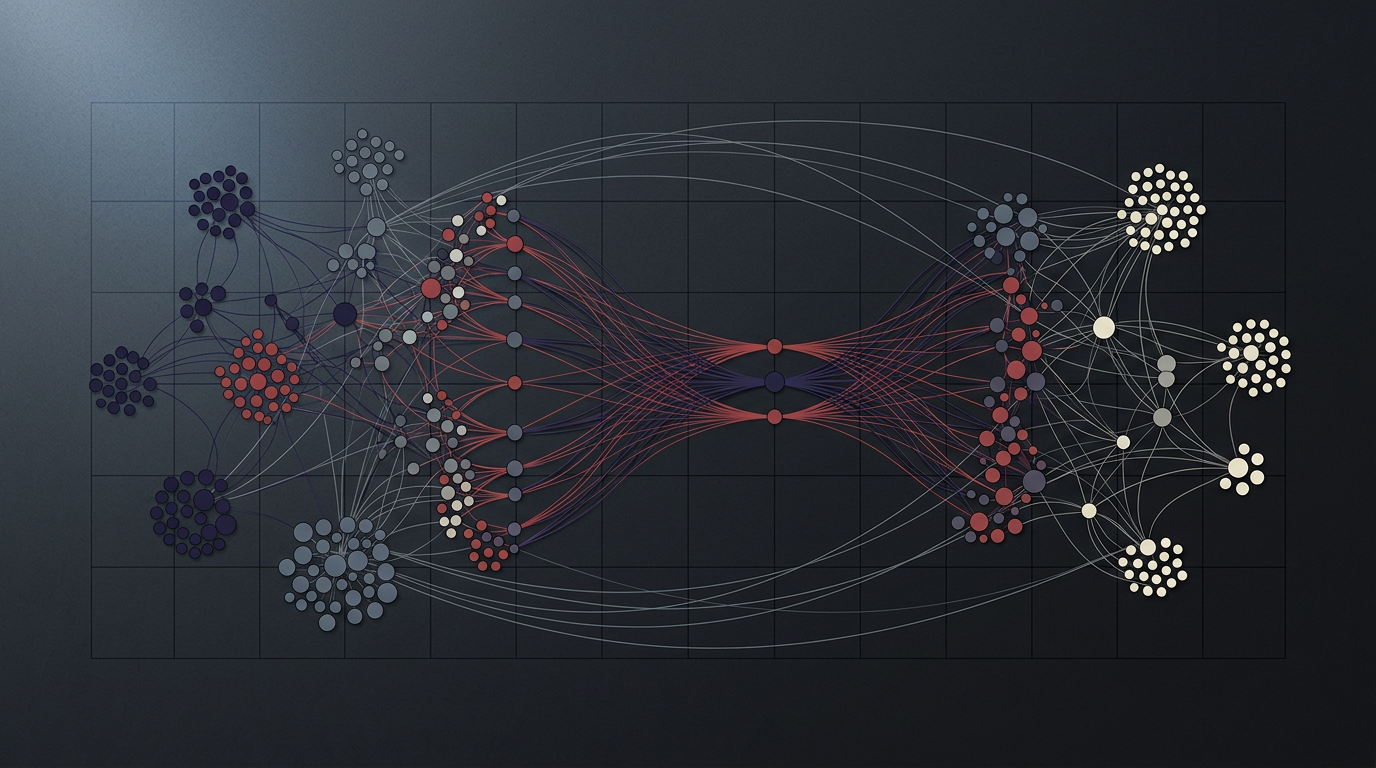

The conversation between Lambert and Raschka suggests that this reliability is eroding. Pre-training—the initial, compute-intensive phase in which a model learns statistical patterns across vast corpora of text—is no longer the primary bottleneck to capability. Instead, the frontier has shifted to post-training: the set of techniques applied after a model's base training, including reinforcement learning from human feedback (RLHF), instruction tuning, and other alignment methods designed to make raw language models useful and safe.

This shift carries significant implications. Post-training is less about scale and more about craft—careful data curation, reward model design, and iterative refinement. It resembles precision engineering more than industrial expansion. For an industry that has organized itself around the logic of exponential scaling—raising ever-larger capital rounds to build ever-larger compute clusters—the transition demands a different set of competencies and, potentially, a different economic model.

The discussion of work culture within leading AI labs reinforces the sense of an industry under compression. References to seventy-two-hour-plus work weeks and Silicon Valley insularity point to organizations racing against both technical limits and competitive clocks. Companies are committing billions of dollars to infrastructure and architectures whose long-term viability remains uncertain.

Geopolitics and the Hardware Bottleneck

The technical inflection point does not exist in isolation. Chinese AI development, operating under a distinct set of constraints—restricted access to the most advanced semiconductor hardware due to U.S. export controls, but potentially fewer regulatory limitations on data collection and usage—represents a parallel path that could diverge meaningfully from Western approaches. The result is not a single race but two overlapping competitions: one for technical capability, another for strategic positioning that extends into national security.

Hardware sits at the intersection of both. NVIDIA's dominance in AI accelerators, the logistics of assembling large compute clusters, and the nascent exploration of alternative chip architectures all featured in the conversation. AI progress has become infrastructure-constrained in ways that recall earlier technology cycles—the buildout of fiber-optic networks in the late 1990s, or the semiconductor capacity races of the 1980s. Access to scarce physical resources now matters as much as, and sometimes more than, algorithmic ingenuity.

Perhaps the most revealing thread in the discussion was the progression from AGI timelines to monetization strategies to acquisition scenarios. That sequence suggests an industry simultaneously reaching for transformative breakthroughs and hedging against the possibility that such breakthroughs may not arrive on schedule. The questions that seemed straightforward in 2022—how soon, how capable, how profitable—have fractured into a web of contingencies.

What remains is a set of forces in open tension: the mathematical limits of scaling against the capital already committed to it; the promise of post-training refinement against its unproven economics at scale; the competitive pressure between nations against the hardware chokepoints that constrain both. How these forces resolve—and in what order—will shape not only the next generation of AI systems but the structure of the industry building them.

With reporting from Lex Fridman.
