Workhuman, an employee management platform, recently unveiled an artificial intelligence tool titled Future Leaders, designed to identify high-potential employees years before they reach senior management positions. According to company reporting, the tool utilizes historical data to reverse-engineer the characteristics of successful leaders, claiming an accuracy rate of approximately 80% in retrospective testing. By analyzing patterns of behavior and responsibility, the system aims to provide managers with a predictive map of their workforce’s future potential, ostensibly reducing the high failure rates associated with executive appointments.

This development arrives at a time when the integration of AI into human resources has moved from experimental to commonplace, with recent surveys suggesting that a significant majority of managers now leverage automated tools for promotion decisions. However, the introduction of predictive modeling into the delicate ecosystem of career advancement represents a shift toward quantifying the unquantifiable. While the objective is to optimize talent allocation and mitigate the costs of poor leadership, the reliance on algorithmic forecasting raises fundamental questions about how organizations define leadership and the potential for systemic bias in the workplace.

The Quantification of Leadership Potential

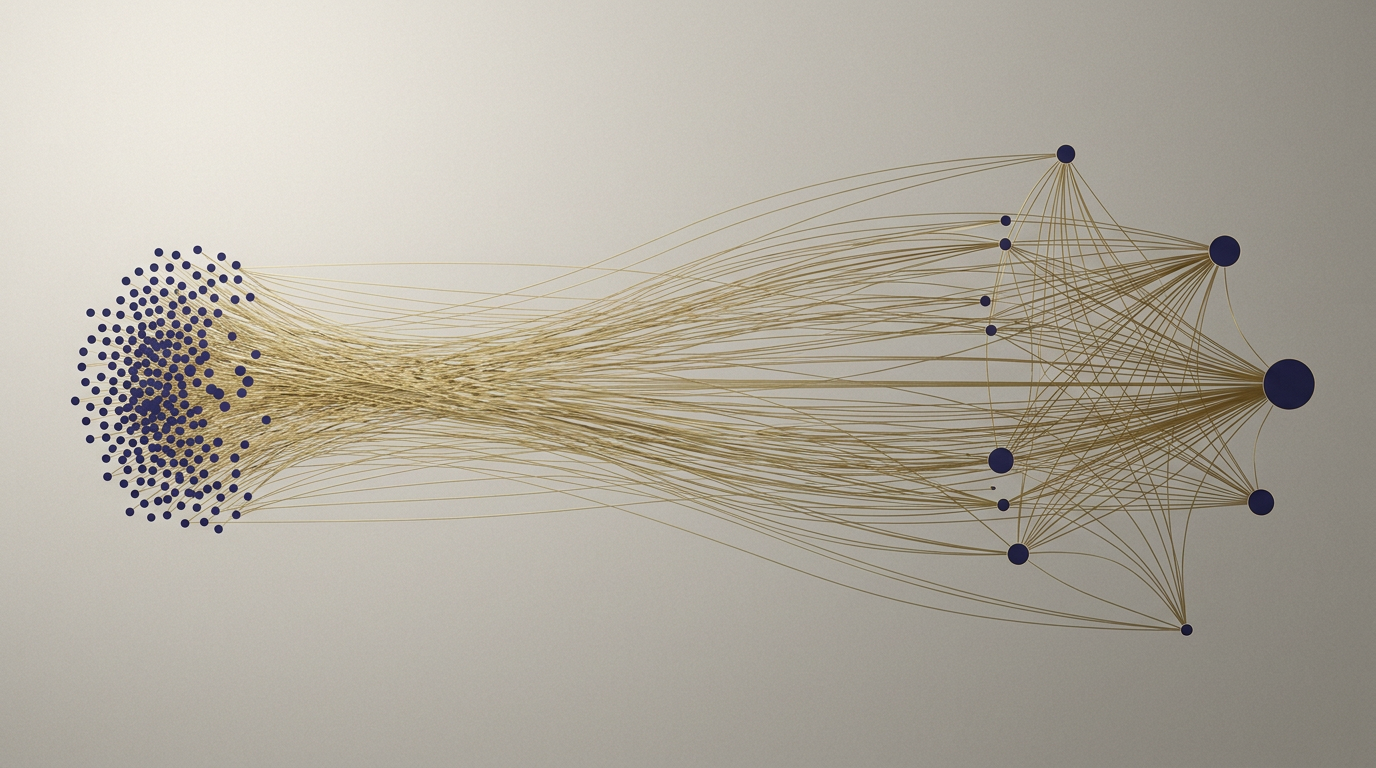

Historically, the process of identifying future leaders has been an amalgamation of performance metrics, mentorship, and subjective managerial intuition. This process, while inherently flawed due to human cognitive biases, has served as the bedrock of organizational culture. By attempting to distill "strategic trust" and other intangible qualities into data points, platforms like Future Leaders seek to replace this informal assessment with a structured, data-driven framework. The logic is that by training models on large datasets of successful leaders, companies can identify patterns that human recruiters might otherwise overlook.

Yet, this approach assumes that the future of an organization will resemble its past. If an AI is trained on historical data, it naturally inherits the structural biases of the previous generation of leaders. If past promotion cycles favored specific personality types, communication styles, or career paths, the algorithm will likely prioritize those same traits, effectively ossifying existing corporate hierarchies. The danger lies in the creation of a feedback loop where the AI identifies candidates who look like the leaders of yesterday, thereby stifling the diversity of thought and experience necessary for organizational adaptation.

The Mechanism of Algorithmic Management

At the core of these tools is the concept of pattern recognition applied to human behavior. When the system explains a promotion decision by citing "strategic trust," it is essentially performing a sophisticated form of correlation analysis. It identifies that individuals who are given certain responsibilities are more likely to be promoted, and then flags others who are being given similar responsibilities. While this provides a degree of transparency—a significant improvement over the "black box" of traditional executive appointments—it also risks reducing a human career to a set of predictive signals.
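The correlation logic described above can be illustrated with a minimal sketch. This is not Workhuman's implementation, which the source does not describe; the function names, responsibility labels, sample records, and the 0.25 threshold are all hypothetical. The sketch measures how much more often people holding a given responsibility were promoted in the past, then flags current employees who hold responsibilities with a positive association.

```python
from collections import defaultdict


def responsibility_lift(history):
    """Return, per responsibility, the promotion rate among people who held it
    minus the overall promotion rate.

    history: list of (set_of_responsibilities, promoted_bool) tuples.
    All data and labels here are hypothetical illustrations.
    """
    base_rate = sum(promoted for _, promoted in history) / len(history)
    held = defaultdict(int)
    held_and_promoted = defaultdict(int)
    for responsibilities, promoted in history:
        for r in responsibilities:
            held[r] += 1
            held_and_promoted[r] += int(promoted)
    return {r: held_and_promoted[r] / held[r] - base_rate for r in held}


def flag_candidates(current, lift, threshold):
    """Flag employees whose summed lift meets the (assumed) threshold."""
    flagged = []
    for name, responsibilities in current.items():
        # Responsibilities never seen in the history contribute nothing.
        score = sum(lift.get(r, 0.0) for r in responsibilities)
        if score >= threshold:
            flagged.append(name)
    return sorted(flagged)


# Hypothetical past promotion records.
history = [
    ({"budget_owner", "client_lead"}, True),
    ({"budget_owner"}, True),
    ({"client_lead"}, False),
    ({"documentation"}, False),
]

# Hypothetical current employees and their responsibilities.
current = {
    "avery": {"budget_owner"},
    "blake": {"documentation", "client_lead"},
    "casey": {"budget_owner", "client_lead"},
}

lift = responsibility_lift(history)
flagged = flag_candidates(current, lift, threshold=0.25)
```

With this sample data, `flagged` contains `avery` and `casey`, the two employees who hold the responsibility most associated with past promotions. The score only captures who was historically given which responsibilities, so any bias in how those responsibilities were assigned carries straight through to the flags.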

This mechanism creates a new dynamic between managers and their teams. If an AI is constantly assessing who is on a "leadership track," the environment may become hyper-optimized, with employees performing tasks specifically designed to trigger positive signals in the software. This is the gaming of the system in its most literal sense. When the metrics become the target, they cease to be good measures of actual leadership potential. Furthermore, if managers begin to defer to the machine’s judgment, they may lose the ability to cultivate talent through the mentorship and risk-taking that often define great leaders but cannot be easily captured in a dataset.

Stakeholders and the Future of Meritocracy

For regulators and corporate boards, the rise of AI-driven promotion tools presents a complex challenge regarding accountability. If an algorithm systematically overlooks certain demographics or fails to account for non-traditional career paths, the liability remains with the employer. The shift toward automated management tools necessitates a higher standard of algorithmic auditability, ensuring that these systems do not inadvertently codify discriminatory practices under the guise of objective data analysis. For the individual employee, the tension is between the desire for a meritocratic, data-backed career path and the fear of being sidelined by a system that fails to understand the nuance of their specific contributions.
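One basic form such an audit could take is comparing how often the tool flags employees in different groups. The sketch below is an assumption about what a simple check might look like, not a description of any vendor's or regulator's procedure. The group labels and records are hypothetical, and the 0.8 cutoff is borrowed from the "four-fifths" rule of thumb used in US employment-selection guidance, which the source does not mention.

```python
def selection_rates(records):
    """Share of each group that the tool flagged as high-potential.

    records: list of (group_label, was_flagged) tuples (hypothetical data).
    """
    totals, flagged = {}, {}
    for group, was_flagged in records:
        totals[group] = totals.get(group, 0) + 1
        flagged[group] = flagged.get(group, 0) + int(was_flagged)
    return {g: flagged[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any flagged employees")
    return {g: rate / best for g, rate in rates.items()}


def groups_below(ratios, cutoff=0.8):
    """Groups whose ratio falls under the assumed cutoff, for human review."""
    return sorted(g for g, ratio in ratios.items() if ratio < cutoff)


# Hypothetical audit sample: group A flagged 4 of 8 times, group B 2 of 8.
records = (
    [("A", True)] * 4 + [("A", False)] * 4
    + [("B", True)] * 2 + [("B", False)] * 6
)

rates = selection_rates(records)
ratios = impact_ratios(rates)
review = groups_below(ratios)
```

Here group B is flagged at half the rate of group A, so it lands in `review`. A result like this does not prove discrimination on its own; it marks where an employer would need to look more closely at how the model reached its output.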

Competitors in the HR tech space will likely follow suit, leading to a market saturation of predictive tools. This will force organizations to distinguish between tools that provide genuine insights and those that merely provide the illusion of control. The risk for the consumer—in this case, the corporation—is the over-reliance on technology that promises to solve the most human of problems: assessing character and potential. As these tools become more sophisticated, the role of the human manager may shift from being a talent scout to being an algorithmic overseer, tasked with validating, rather than discovering, the next generation of leaders.

The Limits of Predictive Analytics

What remains uncertain is whether these tools can truly account for the "black swan" events or the disruptive leadership qualities that do not fit established patterns. An AI may be excellent at identifying the next version of a current VP, but it may struggle to recognize the unconventional thinker who could steer the company through a period of radical change. The reliance on historical datasets inherently limits the model's ability to account for shifts in business strategy, market conditions, or the evolving definitions of what a leader should be in a post-AI world.

As organizations continue to integrate these systems, the industry should watch for whether the reliance on AI leads to a homogenization of leadership styles. If everyone is being coached to match the same "high-potential" pattern, the result may be a workforce that is efficient but lacks the creative friction that drives innovation. The question of whether an algorithm can ever truly understand the essence of leadership remains open, and for now, the most effective approach likely involves using these tools as a starting point for dialogue rather than a final arbiter of human potential.

Ultimately, the efficacy of these tools depends on the human capacity to challenge the machine's output. If managers treat the AI as a source of truth rather than a source of data, they risk losing the very intuition that made them leaders in the first place. As these technologies continue to evolve, the challenge for companies will be to balance the efficiency of data-driven insights with the essential, messy reality of human growth.

With reporting from Fast Company
