Google DeepMind has published details on Decoupled DiLoCo, a distributed training method designed to make large-scale AI model training more resilient and efficient across geographically dispersed and loosely connected hardware. According to the DeepMind blog, the approach builds on the earlier DiLoCo (Distributed Low-Communication) framework, extending it with a decoupled synchronization mechanism that tolerates failures and network instability during training runs.

The announcement arrives at a moment when the AI industry is grappling with the practical limits of scaling model training. As frontier models grow larger, the infrastructure required to train them — thousands of accelerators tightly synchronized over high-bandwidth interconnects — becomes both a bottleneck and a single point of failure. Decoupled DiLoCo represents DeepMind's attempt to loosen these constraints, and its implications reach well beyond Google's own data centers.

Rethinking Synchronization in Distributed Training

Conventional distributed training relies on frequent, tightly coordinated gradient synchronization across all participating devices. This approach works well within a single data center equipped with fast interconnects, but it scales poorly across multiple sites or heterogeneous hardware. A single node failure or network hiccup can stall or corrupt an entire training run, wasting compute time that, at frontier scale, translates into significant cost.
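To make the failure mode concrete, here is a minimal Python sketch of lock-step data-parallel training on a toy problem. It assumes a single scalar weight and a quadratic per-worker loss, both purely illustrative. Every step averages one gradient from each worker, standing in for an all-reduce, so a single worker that never reports blocks the whole step. The names `make_worker`, `failed_worker`, `synchronous_step`, `train`, and `StalledError` are hypothetical and do not come from any real framework.

```python
from typing import Callable, List, Optional, Sequence

# Illustrative assumption: each worker holds one scalar "data shard" target t_i and
# contributes the gradient of the loss (w - t_i)^2 with respect to a shared weight w.
GradFn = Callable[[float], Optional[float]]


class StalledError(RuntimeError):
    """Raised when the global barrier cannot complete because a worker never reported."""


def make_worker(target: float) -> GradFn:
    """Hypothetical healthy worker: returns d/dw of (w - target)^2."""
    def grad(w: float) -> Optional[float]:
        return 2.0 * (w - target)
    return grad


def failed_worker(w: float) -> Optional[float]:
    """Hypothetical crashed node or dropped link: no gradient ever arrives."""
    return None


def synchronous_step(w: float, workers: Sequence[GradFn], lr: float) -> float:
    """One lock-step data-parallel update: every worker must report before anyone moves on."""
    grads: List[Optional[float]] = [worker(w) for worker in workers]
    if any(g is None for g in grads):
        # The all-reduce cannot finish, so every healthy worker is blocked too.
        raise StalledError("a worker failed to report; global step blocked")
    avg = sum(g for g in grads if g is not None) / len(grads)  # stands in for an all-reduce mean
    return w - lr * avg


def train(workers: Sequence[GradFn], steps: int, lr: float = 0.1, w: float = 0.0) -> float:
    """Runs `steps` synchronous updates; communication happens on every single step."""
    for _ in range(steps):
        w = synchronous_step(w, workers, lr)
    return w


if __name__ == "__main__":
    healthy = [make_worker(1.0), make_worker(2.0), make_worker(3.0)]
    print(round(train(healthy, steps=100), 6))  # 2.0, the mean of the targets
    try:
        train(healthy + [failed_worker], steps=100)
    except StalledError as err:
        print("stalled:", err)
```

The point of the toy is the barrier. Three healthy workers converge to the shared optimum, but adding one silent worker halts training at the very first step, even though the other three are still able to compute.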

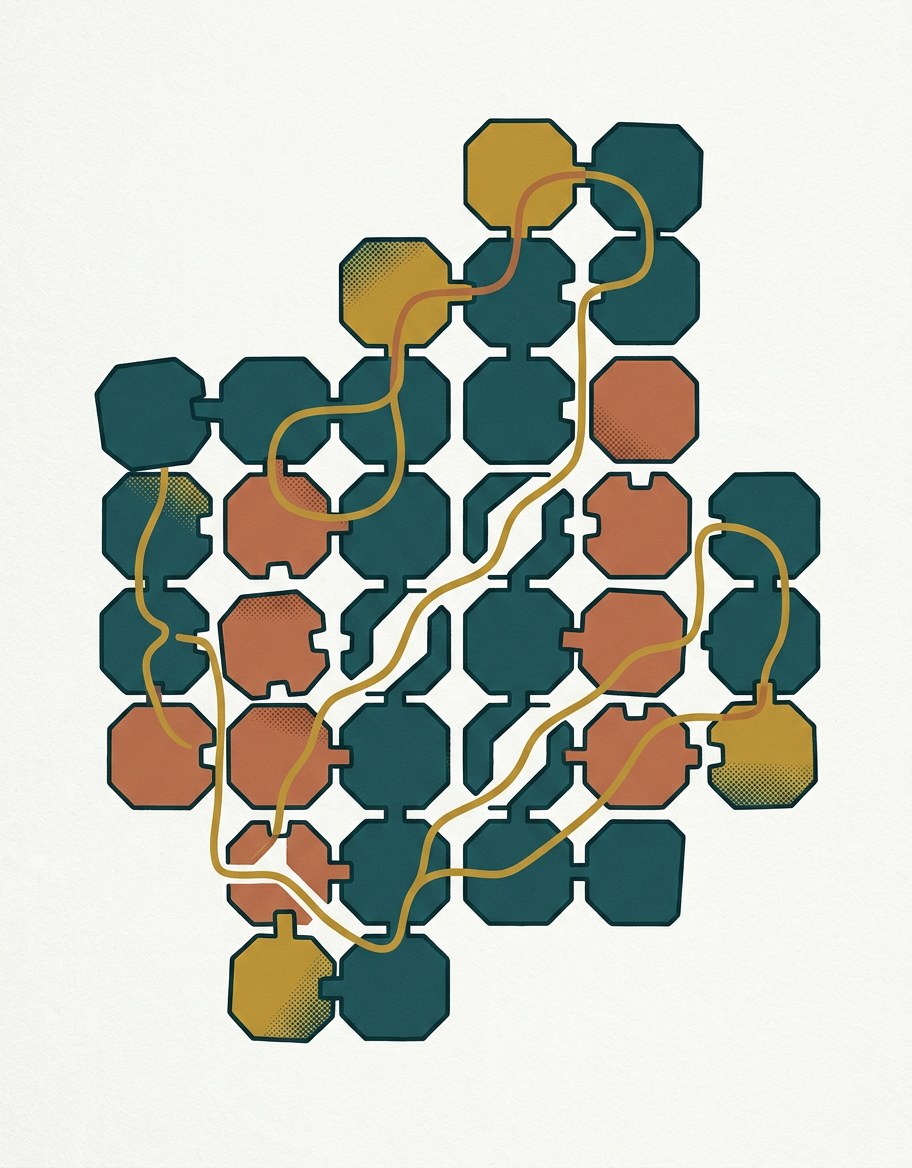

DiLoCo, the predecessor framework, addressed part of this problem by reducing the frequency of synchronization — allowing groups of workers to train semi-independently for longer intervals before merging their progress. Decoupled DiLoCo takes this further by removing the requirement that all worker groups synchronize at the same time. According to DeepMind's blog, this decoupling means that individual worker groups can proceed at their own pace, and the system can gracefully handle stragglers, partial failures, or uneven network conditions without halting the entire training process. The result is a training paradigm that is fundamentally more tolerant of the messy realities of large-scale distributed computing.
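The sketch below contrasts the two ideas on the same kind of toy problem. `diloco_round` follows the general shape described in the original DiLoCo work. Each group starts from the shared parameters and runs H local steps with no communication, and then the averaged parameter change (an "outer gradient") is applied once per round. Plain SGD stands in here for the more elaborate inner and outer optimizers used in that work. `decoupled_round` is a hypothetical relaxation written only to illustrate the idea of not waiting on every group. It is not DeepMind's algorithm, whose synchronization details the blog summary does not spell out. All function names, the scalar quadratic loss, and the hyperparameters are illustrative assumptions.

```python
from typing import List, Sequence

# Illustrative assumption: worker group i only "sees" its own shard, modeled as the
# scalar loss (w - t_i)^2. Plain SGD stands in for both the inner and outer optimizers.


def inner_steps(w: float, target: float, steps: int, lr: float) -> float:
    """Run `steps` local SGD updates on one group's shard with no communication."""
    for _ in range(steps):
        w -= lr * 2.0 * (w - target)
    return w


def outer_update(w_global: float, deltas: List[float], outer_lr: float) -> float:
    """Treat the mean parameter change of the reporting groups as an outer gradient."""
    if not deltas:
        return w_global  # nobody reported: keep the current parameters rather than fail
    return w_global - outer_lr * sum(deltas) / len(deltas)


def diloco_round(w_global: float, targets: Sequence[float], inner_h: int,
                 inner_lr: float, outer_lr: float) -> float:
    """Synchronous DiLoCo-style round: all groups run H steps from w_global and all report."""
    deltas = [w_global - inner_steps(w_global, t, inner_h, inner_lr) for t in targets]
    return outer_update(w_global, deltas, outer_lr)


def decoupled_round(w_global: float, targets: Sequence[float], inner_hs: Sequence[int],
                    alive: Sequence[bool], inner_lr: float, outer_lr: float) -> float:
    """Hypothetical relaxation: each group runs as many inner steps as its own pace allows,
    and groups that are down this round are skipped instead of blocking the update."""
    deltas = [
        w_global - inner_steps(w_global, t, h, inner_lr)
        for t, h, ok in zip(targets, inner_hs, alive)
        if ok
    ]
    return outer_update(w_global, deltas, outer_lr)


if __name__ == "__main__":
    w = 0.0
    for _ in range(20):  # 20 communication rounds cover 20 * 10 = 200 local steps
        w = diloco_round(w, [1.0, 2.0, 3.0], inner_h=10, inner_lr=0.1, outer_lr=1.0)
    print(round(w, 6))  # 2.0, the mean of the targets

    w = 0.0
    for _ in range(50):
        # Group 2 has crashed; group 1 is a straggler that manages only 2 inner steps.
        w = decoupled_round(w, [1.0, 3.0, 100.0], inner_hs=[10, 2, 10],
                            alive=[True, True, False], inner_lr=0.1, outer_lr=1.0)
    print(round(w, 4))  # about 1.5748, between the two live targets
```

Compared with the lock-step loop, the DiLoCo-style round communicates once per H local steps instead of on every step. In the decoupled toy, the crashed group simply contributes nothing, and training continues. Note the side effect, though: the straggler completes fewer inner steps, so its delta is smaller and the run settles at a weighted mean rather than the plain mean (2.0) of the live shards. Imbalances like this are exactly the kind of convergence question a real asynchronous scheme has to handle. The sketch does not model staleness, bandwidth, or how Decoupled DiLoCo itself deals with these issues.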

Implications for the Training Infrastructure Race

The significance of Decoupled DiLoCo extends beyond engineering elegance. Today, only a handful of organizations possess the concentrated compute infrastructure needed to train frontier models — massive clusters of tens of thousands of GPUs or TPUs connected by custom high-bandwidth fabrics. This concentration creates both economic and geopolitical chokepoints. A method that enables effective training across loosely coupled, geographically distributed hardware could, in principle, democratize access to large-scale model development.

That said, the gap between a promising research result and production-ready infrastructure is substantial. DeepMind's publication demonstrates the approach's viability, but questions remain about how Decoupled DiLoCo performs at the very largest model scales, how it interacts with different hardware configurations, and whether its convergence properties hold across diverse training regimes. There is also the question of whether competitors, and the broader open-source community, will adopt or adapt similar techniques. Distributed training research at Meta, along with efforts from startups focused on decentralized compute, suggests that the appetite for fault-tolerant training methods is widespread. DeepMind's contribution may accelerate a broader shift in how the industry thinks about the relationship between hardware topology and training algorithms.

As AI training runs grow longer, more expensive, and more dependent on globally distributed resources, the resilience of the training process itself becomes a first-order concern. Decoupled DiLoCo offers one credible path forward, but whether it becomes the dominant paradigm — or merely one ingredient in a broader toolkit — will depend on how well it survives contact with the full complexity of production-scale workloads. For developers and infrastructure teams watching the frontier, the message is clear: the architecture of training is becoming as important as the architecture of the models themselves.

With reporting from DeepMind Blog
